Multiphysics Reduced-Order Model (ROM)

Theory and Physics

What is ROM?

Professor, are reduced-order models something that sacrifices accuracy for speed? When I hear "approximation," I can't help but imagine a loss of accuracy...

Good question. In short, for problems that suit it, a ROM can cut computation time to 1/1000 or less while keeping almost the same accuracy. For example, suppose a single analysis of a 100,000-degree-of-freedom FEM model takes 30 minutes. A ROM can solve the same problem with just 20 to 50 degrees of freedom and return results in about 0.01 seconds.

What, from 100,000 to 50!? Can accuracy really be maintained after reducing it that much?

It can. Here's how it works. If you collect and examine dozens of full FEM results, you'll find that most of the variation can often be explained by a small number of "patterns." For example, even if you calculate 100 cases of thermal deformation in an automobile engine block, 99.9% of the variation in temperature distribution can be reproduced by superimposing about 10 basis patterns. Extracting these principal patterns is done by POD (Proper Orthogonal Decomposition), and solving the equations on these patterns is what ROM does.

I see, is it like JPEG compression for photos? Keeping the parts visible to humans and discarding the invisible parts...

Exactly! JPEG decomposes an image into frequency components and keeps only the important ones, while ROM decomposes the variation of a physical field and keeps only the important modes. The idea is much the same, with one difference: JPEG uses a fixed cosine basis, whereas POD learns its basis from the data. This technology is now essential for real-time response prediction in digital twins and is implemented in tools like Ansys Twin Builder and Siemens Simcenter Amesim.

Mathematics of POD (Proper Orthogonal Decomposition)

Please explain the mathematical mechanism of POD. What does "extracting patterns" mean concretely? How is it done?

First, solve the full-order model (FOM) $N_s$ times with different parameters or at different time steps, and build a snapshot matrix by arranging the solution vectors $\mathbf{u}_i \in \mathbb{R}^N$ (where $N$ is the number of degrees of freedom) as columns:

$$\mathbf{S} = [\mathbf{u}_1, \mathbf{u}_2, \dots, \mathbf{u}_{N_s}] \in \mathbb{R}^{N \times N_s}$$

Apply Singular Value Decomposition (SVD) to this:

$$\mathbf{S} = \mathbf{U}\boldsymbol{\Sigma}\mathbf{V}^T$$

Here, each column of $\mathbf{U} = [\boldsymbol{\phi}_1, \boldsymbol{\phi}_2, \dots]$ is a POD mode (basis vector), and the diagonal components $\sigma_1 \geq \sigma_2 \geq \dots$ of $\boldsymbol{\Sigma}$ are the singular values representing the "importance" of each mode.

So, the larger the singular value, the more important the mode. And where do you "cut it off"?

It's determined by the cumulative energy ratio. Define the cumulative contribution rate up to the top $r$ modes as:

$$E(r) = \frac{\sum_{i=1}^{r} \sigma_i^2}{\sum_{i=1}^{N_s} \sigma_i^2}$$

and select the smallest $r$ that satisfies $E(r) \geq 0.999$ (99.9%). In practice, $r$ is typically around 10 to 100, far smaller than $N$, which can be 100,000 to 1,000,000. The matrix formed by arranging the top $r$ POD bases is $\boldsymbol{\Phi} = [\boldsymbol{\phi}_1, \dots, \boldsymbol{\phi}_r] \in \mathbb{R}^{N \times r}$, and the full-order solution is approximated as:

$$\mathbf{u} \approx \boldsymbol{\Phi}\mathbf{a} = \sum_{i=1}^{r} a_i \boldsymbol{\phi}_i$$

Here $\mathbf{a} \in \mathbb{R}^r$ is the vector of reduced coordinates, and finding it is the job of the ROM.
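The steps so far (snapshot matrix, SVD, energy-based truncation, reconstruction) fit in a few lines of NumPy. This is only a minimal sketch: the function name `pod_basis` and the synthetic snapshot family $e^{-\mu x}$ are assumptions made for illustration, not part of any particular tool.

```python
import numpy as np

def pod_basis(S, energy=0.999):
    """POD of a snapshot matrix S (N x Ns): return (Phi, sigma, r)."""
    # Thin SVD: columns of U are the POD modes, sigma is sorted in descending order
    U, sigma, _ = np.linalg.svd(S, full_matrices=False)
    # Cumulative energy ratio E(r) = sum_{i<=r} sigma_i^2 / sum_i sigma_i^2
    E = np.cumsum(sigma**2) / np.sum(sigma**2)
    # Smallest r with E(r) >= energy (clamped in case of round-off)
    r = min(int(np.searchsorted(E, energy)) + 1, len(sigma))
    return U[:, :r], sigma, r

# Hypothetical snapshots: a smooth one-parameter family sampled on 200 grid points
x = np.linspace(0.0, 1.0, 200)
mus = np.linspace(0.5, 2.0, 40)
S = np.column_stack([np.exp(-mu * x) for mu in mus])   # shape (200, 40)

Phi, sigma, r = pod_basis(S)

# Reduced coordinates a = Phi^T u and reconstruction u ~ Phi a for every snapshot
a = Phi.T @ S
rel_err = np.linalg.norm(S - Phi @ a) / np.linalg.norm(S)
print(f"r = {r}, relative reconstruction error = {rel_err:.2e}")
```

For the training snapshots themselves, the squared relative error in the Frobenius norm equals $1 - E(r)$, so the 99.9% criterion bounds the reconstruction error at about 3%.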

Reduction via Galerkin Projection

After creating the basis with POD, how do you derive the reduced equations?

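Suppose the semi-discretized full-order equation has the following form:

$$\mathbf{M}\dot{\mathbf{u}} + \mathbf{K}\mathbf{u} = \mathbf{f}$$

Substitute $\mathbf{u} = \boldsymbol{\Phi}\mathbf{a}$ into this, and multiply both sides from the left by $\boldsymbol{\Phi}^T$ (Galerkin projection):

$$\underbrace{\boldsymbol{\Phi}^T\mathbf{M}\boldsymbol{\Phi}}_{\tilde{\mathbf{M}}}\,\dot{\mathbf{a}} + \underbrace{\boldsymbol{\Phi}^T\mathbf{K}\boldsymbol{\Phi}}_{\tilde{\mathbf{K}}}\,\mathbf{a} = \underbrace{\boldsymbol{\Phi}^T\mathbf{f}}_{\tilde{\mathbf{f}}}$$

The $N \times N$ system has been reduced to $r \times r$! For a linear system, $\tilde{\mathbf{M}}$, $\tilde{\mathbf{K}}$, and $\tilde{\mathbf{f}}$ can be precomputed offline, so online you only need to solve a small system of equations.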
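To make the offline/online split concrete, here is a small sketch under stated assumptions: the full-order model is a hypothetical 1D heat-conduction problem with a lumped mass matrix, time integration is backward Euler, and the basis size `r = 5` is fixed by hand rather than chosen by the energy criterion. None of these choices come from a specific tool.

```python
import numpy as np

def backward_euler(M, K, f, u0, dt, steps):
    """Integrate M du/dt + K u = f with backward Euler; states are returned as columns."""
    A = M + dt * K
    states = [u0]
    for _ in range(steps):
        states.append(np.linalg.solve(A, M @ states[-1] + dt * f))
    return np.column_stack(states)

# Hypothetical FOM: 1D heat conduction with a uniform source and fixed-temperature ends
N, dt, steps, r = 100, 1e-3, 200, 5
h = 1.0 / (N + 1)
M = h * np.eye(N)                                               # lumped mass matrix
K = (2.0 * np.eye(N) - np.eye(N, k=1) - np.eye(N, k=-1)) / h    # stiffness matrix
f = h * np.ones(N)

# Offline: collect snapshots, build the POD basis, project the operators once
U_fom = backward_euler(M, K, f, np.zeros(N), dt, steps)
Phi = np.linalg.svd(U_fom, full_matrices=False)[0][:, :r]
M_r, K_r, f_r = Phi.T @ M @ Phi, Phi.T @ K @ Phi, Phi.T @ f

# Online: the same time integrator, now applied to an r x r system
a = backward_euler(M_r, K_r, f_r, np.zeros(r), dt, steps)
U_rom = Phi @ a

rel_err = np.linalg.norm(U_rom - U_fom) / np.linalg.norm(U_fom)
print(f"{N} -> {r} DOF, relative error = {rel_err:.2e}")
```

The online loop never touches an $N$-sized matrix; the full dimension reappears only when $\boldsymbol{\Phi}\mathbf{a}$ is expanded for post-processing.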

Wow, so a 100,000 x 100,000 matrix becomes 50 x 50! That should be fast. But what about nonlinear cases?

Sharp question. When there is a nonlinear term $\mathbf{g}(\mathbf{u})$, projecting it requires evaluating $\boldsymbol{\Phi}^T \mathbf{g}(\boldsymbol{\Phi}\mathbf{a})$ at every step. The cost of evaluating $\mathbf{g}$ still scales with the full-order dimension $N$, so the ROM is no faster as is. This is the core challenge of nonlinear ROM, and it is addressed by hyperreduction, explained later.

Multiphysics ROM Formulation

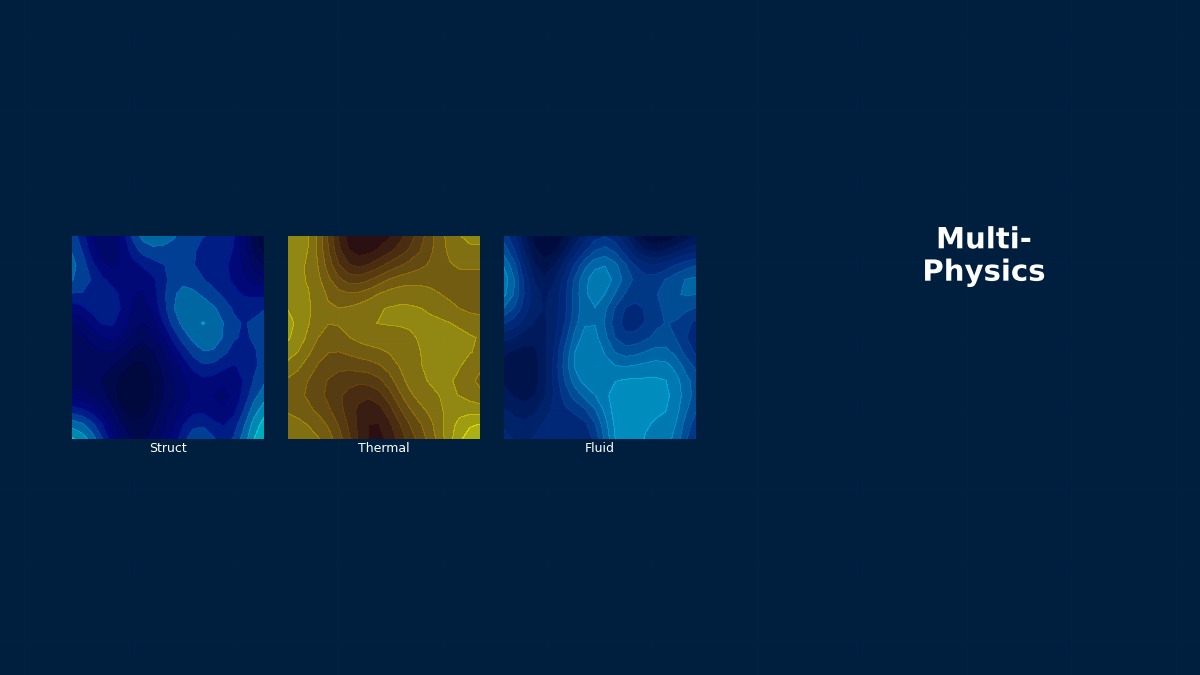

For multiphysics problems like structural-thermal coupling, how does ROM work? Do you create separate ROMs for each physics field?

There are three main approaches. Let's explain using a coupled problem of a structural field $\mathbf{u}_s$ and a temperature field $\mathbf{u}_t$:

1. Monolithic ROM: Perform POD on the combined state vector of all physics fields $\mathbf{u} = [\mathbf{u}_s^T, \mathbf{u}_t^T]^T$. Naturally captures coupling effects but has scaling issues, because the fields differ by orders of magnitude (e.g., displacements on the order of $10^{-3}$ m versus temperatures on the order of $10^2$ K).

2. Separated ROM: Apply POD independently to each physics field and couple the respective ROMs at the interface. Easy to reuse existing ROM code, but requires attention to coupling stability.

3. Block-Diagonal ROM: A compromise that assembles the POD bases of the individual physics fields into a block-diagonal basis and projects the coupling terms as off-diagonal blocks. Most commonly used in practice.
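The block-diagonal approach can be sketched as follows. Everything concrete here is an assumption made for illustration: the steady thermo-elastic system, the random coupling matrix `K_st`, the two-parameter heat source, and the helper names `lap1d`, `pod`, and `load`.

```python
import numpy as np

def lap1d(n):
    """Tridiagonal (-1, 2, -1) matrix, a stand-in for a stiffness or conductivity operator."""
    return 2.0 * np.eye(n) - np.eye(n, k=1) - np.eye(n, k=-1)

def pod(S, r):
    """First r left singular vectors of the snapshot matrix S."""
    return np.linalg.svd(S, full_matrices=False)[0][:, :r]

# Hypothetical steady thermo-elastic FOM: [K_ss K_st; 0 K_tt] [u_s; u_t] = [0; q]
n_s, n_t, r_s, r_t = 60, 40, 2, 2
rng = np.random.default_rng(0)
K_ss = 1e3 * lap1d(n_s)                            # structural stiffness
K_tt = lap1d(n_t)                                  # thermal conductivity
K_st = -1e-2 * rng.standard_normal((n_s, n_t))     # thermal-expansion coupling
K = np.block([[K_ss, K_st], [np.zeros((n_t, n_s)), K_tt]])
x_t = np.linspace(0.0, 1.0, n_t)

def load(mu):
    """Right-hand side for heat-source parameters mu = (mu1, mu2)."""
    q = mu[0] * np.sin(np.pi * x_t) + mu[1] * x_t
    return np.concatenate([np.zeros(n_s), q])

# Snapshots come from the coupled FOM; each field then gets its own POD
train = [(1.0, 0.0), (0.0, 1.0), (1.0, 1.0), (2.0, 0.5)]
S = np.column_stack([np.linalg.solve(K, load(mu)) for mu in train])
Phi_s, Phi_t = pod(S[:n_s], r_s), pod(S[n_s:], r_t)

# Block-diagonal basis: coupling survives as the off-diagonal block Phi_s^T K_st Phi_t
Phi = np.block([[Phi_s, np.zeros((n_s, r_t))], [np.zeros((n_t, r_s)), Phi_t]])
K_r = Phi.T @ K @ Phi

mu_new = (0.7, 1.3)
u_rom = Phi @ np.linalg.solve(K_r, Phi.T @ load(mu_new))
u_fom = np.linalg.solve(K, load(mu_new))
rel_err = np.linalg.norm(u_rom - u_fom) / np.linalg.norm(u_fom)
print(f"reduced operator {K_r.shape}, relative error = {rel_err:.2e}")
```

Because each field has its own SVD, the difference in magnitude between displacement and temperature never enters a single decomposition. The error is at round-off level here only because the toy load has two degrees of freedom and the system is linear; a realistic problem would need more modes per field.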

I see, with the block-diagonal type, you can normalize each field even if the physical quantities have different scales, and still retain coupling. Which one is most common in practice?

In commercial tools, the block-diagonal type is mainstream, though not universal. In Ansys Twin Builder, for instance, each physics FOM is individually reduced to a ROM and exported as an FMU (Functional Mock-up Unit), then coupled on a system model, which is essentially a form of separated ROM. COMSOL's "Model Reduction" feature, on the other hand, takes a block-diagonal approach.

Parametric ROM

Can you create a ROM that can still be used when design parameters (material constants, shape dimensions) change? It would be meaningless if you have to re-solve the full FEM every time...

That's Parametric ROM (pROM). It incorporates the dependence on parameters $\boldsymbol{\mu}$ (e.g., Young's modulus, plate thickness, inflow velocity) into the ROM. There are two basic strategies:

Global Basis Method: Sample the parameter space broadly, collect all snapshots, and apply POD. The basis includes parameter variations, so it can be used for new parameter values.

Interpolation Method: Build local ROMs for discrete parameter values and interpolate the ROM operators for intermediate parameters. However, simply interpolating ROM matrices can break positive definiteness, requiring geometric methods like interpolation on the Grassmann manifold.
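The global basis method can be sketched on a toy problem. The sketch assumes that the operator depends affinely on a single parameter, $\mathbf{A}(\mu) = \mathbf{A}_0 + \mu\mathbf{A}_1$, so that the projected pieces can be stored offline; this affine structure, the diffusion-reaction model, and the names `fom_solve` and `rom_solve` are illustrative assumptions.

```python
import numpy as np

N, r = 80, 6
h = 1.0 / (N + 1)
x = np.linspace(h, 1.0 - h, N)

# Hypothetical parametric FOM with affine dependence: (A0 + mu * A1) u = f
A0 = (2.0 * np.eye(N) - np.eye(N, k=1) - np.eye(N, k=-1)) / h**2   # diffusion
A1 = np.diag((x > 0.5).astype(float))                              # reaction on half the domain
f = np.ones(N)

def fom_solve(mu):
    """Full-order solve for one parameter value."""
    return np.linalg.solve(A0 + mu * A1, f)

# Offline: sample the parameter range broadly, stack all snapshots, apply one global POD
mu_train = np.linspace(1.0, 10.0, 10)
S = np.column_stack([fom_solve(mu) for mu in mu_train])
Phi = np.linalg.svd(S, full_matrices=False)[0][:, :r]
A0_r, A1_r, f_r = Phi.T @ A0 @ Phi, Phi.T @ A1 @ Phi, Phi.T @ f

def rom_solve(mu):
    """Online stage: assemble and solve an r x r system for any mu."""
    return Phi @ np.linalg.solve(A0_r + mu * A1_r, f_r)

mu_new = 4.3   # inside the training range, but not a training point
u_ref = fom_solve(mu_new)
rel_err = np.linalg.norm(rom_solve(mu_new) - u_ref) / np.linalg.norm(u_ref)
print(f"mu = {mu_new}: relative error = {rel_err:.2e}")
```

The test value lies inside the sampled range on purpose; outside that range the same basis carries no accuracy guarantee.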

So, for example, something like "instantly checking the stress distribution while varying plate thickness from 3mm to 5mm" becomes possible?

Yes. When evaluating 100 different parameter combinations in design exploration, full FEM would take 100 × 30 minutes = 50 hours, whereas a pROM would take 100 × 0.01 seconds = 1 second. In fact, crash ROMs are increasingly being used for thickness optimization in automotive body design.

Physical Meaning of Each Term

- POD mode $\boldsymbol{\phi}_i$: The "principal component" of the full-order solution. The first mode represents the direction of greatest variation, and higher-order modes capture finer variations. Unlike structural vibration eigenmodes, POD modes are data-driven and applicable to any physical field (temperature, pressure, displacement, etc.).

- Singular value $\sigma_i$: The square root of the "energy" of the corresponding mode. $\sigma_i^2$ is proportional to the contribution of that mode. The faster the singular values decay ($\sigma_i \sim i^{-p}$, $p > 1$), the higher the approximation accuracy possible with fewer modes. Decay is slow for convection-dominated flows and fast for diffusion-dominated problems.

- Galerkin projection $\boldsymbol{\Phi}^T(\cdot)$: The operation of orthogonally projecting the full-order residual onto the low-dimensional space. Uses the same space for the test and trial functions (Bubnov-Galerkin). Enforces the condition that the residual is orthogonal to the column space of the POD basis.

- Reduced mass matrix $\tilde{\mathbf{M}}$: If the POD basis is orthonormal with respect to the $\mathbf{M}$-inner product ($\boldsymbol{\Phi}^T\mathbf{M}\boldsymbol{\Phi} = \mathbf{I}$), or if $\mathbf{M} = \mathbf{I}$ and the basis is orthonormal in the usual sense, then $\tilde{\mathbf{M}} = \mathbf{I}$ (identity matrix), further simplifying computation.

Assumptions and Applicability Limits

- POD bases are only valid within the range of the training data. Extrapolation to parameters outside the training range may cause rapid degradation in accuracy.

- For convection-dominated problems (e.g., high Reynolds number flow), the singular values decay slowly, so many modes are required for sufficient accuracy.

- For strong nonlinearities (contact, fracture, phase transformation, etc.), the stability of Galerkin projection is not guaranteed.

- In multiphysics ROM, inappropriate scaling of each physics field can bias the POD towards one field.

- Problems involving moving boundaries or topology changes are difficult to reduce to ROM as-is (require special basis updates).
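The scaling caveat above is easy to reproduce. The sketch below uses purely synthetic snapshots (displacements of order $10^{-4}$ and temperature changes of order 50, both invented for illustration) and compares a monolithic POD with and without per-field normalization; the helper name `structural_error` is likewise an assumption.

```python
import numpy as np

rng = np.random.default_rng(0)
n_s, n_t, n_snap = 50, 50, 30
x = np.linspace(0.0, 1.0, n_s)

# Synthetic snapshots (hypothetical): displacement in metres, temperature change in kelvin
P_s = np.column_stack([np.sin(np.pi * x), np.sin(2 * np.pi * x)])
P_t = np.column_stack([np.cos(np.pi * x), np.cos(2 * np.pi * x)])
S_s = 1e-4 * P_s @ rng.standard_normal((2, n_snap))
S_t = 50.0 * P_t @ rng.standard_normal((2, n_snap))

def structural_error(S, energy=0.999):
    """Truncate a monolithic POD by energy; return (r, relative error on the structural rows)."""
    U, s, _ = np.linalg.svd(S, full_matrices=False)
    E = np.cumsum(s**2) / np.sum(s**2)
    r = min(int(np.searchsorted(E, energy)) + 1, len(s))
    Phi = U[:, :r]
    S_rec = Phi @ (Phi.T @ S)
    err = np.linalg.norm(S_rec[:n_s] - S[:n_s]) / np.linalg.norm(S[:n_s])
    return r, err

# Raw stacking: the temperature rows carry almost all of the "energy"
r_raw, err_raw = structural_error(np.vstack([S_s, S_t]))

# Each field divided by its own Frobenius norm before stacking
S_scaled = np.vstack([S_s / np.linalg.norm(S_s), S_t / np.linalg.norm(S_t)])
r_scl, err_scl = structural_error(S_scaled)

print(f"raw:    r = {r_raw}, structural error = {err_raw:.2e}")
print(f"scaled: r = {r_scl}, structural error = {err_scl:.2e}")
```

Without scaling, the 99.9% criterion is satisfied by temperature modes alone and the displacement field is barely represented. Dividing by the Frobenius norm is only one possible normalization; physically motivated reference values are another.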

Dimensional Analysis and Typical Reduction Ratios

| Problem Type | FOM DOF $N$ | ROM DOF $r$ | Reduction Ratio $N/r$ | Typical Accuracy (RMSE) |
|---|---|---|---|---|
| Structural-Thermal Coupling | $10^5$ | 20–50 | 2,000–5,000 | < 0.5% |
| FSI (Fluid-Structure Interaction) | $10^6$ | 50–200 | 5,000–20,000 | 1–3% |
| Electromagnetic-Thermal Coupling | $10^5$ | 15–40 | 2,500–7,000 | < 1% |
| Unsteady Fluid | $10^6$ | 100–500 | 2,000–10,000 | 2–5% |

The Surprising Origin of POD — A Universal Tool Born from Turbulence Research

The prototype of POD dates back to the expansion theorem for stochastic processes (the Karhunen-Loève expansion), proposed independently by Karhunen and Loève in the 1940s. Lumley (1967) introduced it to fluid dynamics with the aim of extracting "coherent structures" from turbulent fields. At the time, limited computing power restricted it to small data sets, but in the 2000s the combination of large-scale FEM and advances in SVD algorithms made it the standard method for ROM construction. Interestingly, the method is mathematically essentially the same as Principal Component Analysis (PCA) in statistics and the Karhunen-Loève transform (KLT) in signal processing, both of which can be computed with an SVD. It is a good example of the same mathematical idea being "rediscovered" in several different fields.

Numerical Methods and Implementation

Snapshot Collection Strategy

I heard that ROM accuracy depends heavily on snapshot quality. How can we collect snapshots efficiently?

There are three main strategies:

1. Uniform Sampling: Divide the parameter space into a grid and run FOM at each point. Simple, but suffers from combinatorial explosion (curse of dimensionality) as the number of parameters increases. Practical for 2–3 parameters.

2. Latin Hypercube Sampling (LHS): Stratified random sampling that divides each parameter range into equal-width intervals and places exactly one sample in each, covering the parameter space efficiently. Achieves comparable coverage with fewer samples than a full grid. Most commonly used in practice.

3. Greedy Method (Adaptive Sampling): Sequentially adds the parameter point where the ROM error estimate is maximum. Most efficient but requires implementation of an error estimator.
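As an illustration of the second strategy, here is a minimal NumPy sketch of Latin Hypercube Sampling. The function name `latin_hypercube` is an assumption, the thickness range reuses the 3 to 5 mm example from the parametric ROM discussion, and the Young's modulus range is purely hypothetical.

```python
import numpy as np

def latin_hypercube(n_samples, bounds, seed=0):
    """Return an (n_samples, d) array with one sample per stratum in every dimension."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)          # shape (d, 2): [lower, upper]
    d = bounds.shape[0]
    # One random point inside each of n_samples equal-width strata of [0, 1)
    u = (np.arange(n_samples)[:, None] + rng.random((n_samples, d))) / n_samples
    # Shuffle the strata independently in each dimension
    for j in range(d):
        u[:, j] = rng.permutation(u[:, j])
    # Map from the unit hypercube to the physical parameter ranges
    return bounds[:, 0] + u * (bounds[:, 1] - bounds[:, 0])

# Plate thickness 3-5 mm and a hypothetical Young's modulus range of 190-210 GPa
bounds = [(3.0, 5.0), (190.0, 210.0)]
X = latin_hypercube(10, bounds, seed=1)
for thickness, youngs in X:
    print(f"t = {thickness:.2f} mm, E = {youngs:.1f} GPa")   # each row is one FOM run
```

With 10 samples, every 0.2 mm thickness interval and every 2 GPa modulus interval is visited exactly once, whereas a grid with the same per-axis resolution would need 100 FOM runs.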

For multiphysics, do you ever sample separately for structure and heat?

If the coupling is weak, you can sometimes take snapshots independently for each physics field, but for strong coupling, you must take snapshots from the coupled FOM. For example, in thermal-structural coupling of a circuit board, temperature distribution and warpage deformation are closely related, so learning the variation pattern of only one side cannot reproduce the coupling effect.

Hyperreduction

You mentioned earlier that evaluating nonlinear terms is a bottleneck. How does hyperreduction work?

It's a core technology for nonlinear ROM. Let me introduce two representative methods:

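DEIM (Discrete Empirical Interpolation Method): Approximates the nonlinear term $\mathbf{g}(\mathbf{u})$ itself with a POD basis and evaluates $\mathbf{g}$ only at a few "magic points" (interpolation points). Eliminates the need to scan all elements, reducing the cost of evaluating the nonlinear term from $O(N)$ to $O(m)$ ($m \ll N$).

$$\mathbf{g}(\mathbf{u}) \approx \boldsymbol{\Psi}(\mathbf{P}^T\boldsymbol{\Psi})^{-1}\mathbf{P}^T\mathbf{g}(\mathbf{u})$$

Here, $\boldsymbol{\Psi} \in \mathbb{R}^{N \times m}$ is the POD basis for the nonlinear term, and $\mathbf{P} \in \mathbb{R}^{N \times m}$ is the selection matrix for the magic points, so $\mathbf{P}^T\mathbf{g}$ simply picks out the $m$ sampled entries of $\mathbf{g}$.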
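The point selection in DEIM is a short greedy loop. The sketch below follows the standard algorithm; the function names `deim_indices` and `deim_approx` and the test function $e^{-\mu x^2}$ standing in for a nonlinear term are assumptions made for illustration.

```python
import numpy as np

def deim_indices(Psi):
    """Greedy DEIM point selection for a basis Psi (N x m); returns m row indices."""
    m = Psi.shape[1]
    idx = [int(np.argmax(np.abs(Psi[:, 0])))]
    for j in range(1, m):
        # Interpolate the j-th basis vector at the points chosen so far
        c = np.linalg.solve(Psi[idx, :j], Psi[idx, j])
        residual = Psi[:, j] - Psi[:, :j] @ c
        # Next point: where the interpolation residual is largest
        idx.append(int(np.argmax(np.abs(residual))))
    return np.array(idx)

def deim_approx(Psi, idx, g_at_points):
    """Reconstruct g ~ Psi (P^T Psi)^{-1} P^T g from its values at the DEIM points."""
    return Psi @ np.linalg.solve(Psi[idx, :], g_at_points)

# Hypothetical nonlinear term on a 200-point grid, parametrized by mu
x = np.linspace(0.0, 1.0, 200)

def g(mu):
    return np.exp(-mu * x**2)

# Offline: POD basis of nonlinear-term snapshots, then point selection
m = 8
G = np.column_stack([g(mu) for mu in np.linspace(1.0, 3.0, 30)])
Psi = np.linalg.svd(G, full_matrices=False)[0][:, :m]
idx = deim_indices(Psi)

# Online: only the m sampled entries are needed (the full vector is built here for checking)
g_true = g(2.2)
g_hat = deim_approx(Psi, idx, g_true[idx])
rel_err = np.linalg.norm(g_hat - g_true) / np.linalg.norm(g_true)
print(f"{m} of {len(x)} entries sampled, relative error = {rel_err:.2e}")
```

In a real ROM the factor $\boldsymbol{\Phi}^T\boldsymbol{\Psi}(\mathbf{P}^T\boldsymbol{\Psi})^{-1}$ would be precomputed offline, and only the entries of $\mathbf{g}$ at the selected indices would be assembled online.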

ECSW (Energy-Conserving Sampling and Weighting): Optimizes a subset of elements and weights to approximate the Galerkin-projected energy with a few elements. Characterized by preserving the energy conservation property of Galerkin projection, offering high stability for structural nonlinearities (large deformation, contact).

Both are based on the idea that "you can reconstruct the whole from a few representative points without computing all elements." In practice, DEIM is often used for fluid systems and ECSW for structural systems.

Data-Driven ROM

I've also heard about ROMs using machine learning lately. How are they different from physics-based ROMs?
