Polynomial Chaos Expansion (PCE) for Uncertainty Quantification

Theory and Physics

Overview of PCE

Professor, I heard that Polynomial Chaos Expansion (PCE) is more efficient than the Monte Carlo method, is that true?

If the input dimension is low—roughly 5 to 10 or fewer—it's overwhelmingly more efficient. For example, consider a car crash analysis where the Young's modulus of the material, plate thickness, and friction coefficient are treated as three uncertain parameters. With the Monte Carlo method, you'd need to run the FEM about 10,000 times to stabilize the statistics. But with PCE, you can achieve equal or better accuracy with only 50 to 100 FEM runs.

What? You mean it can be done with 1/100th the computational cost? How does that work?

The key idea is "approximating the response surface with polynomials." We represent the uncertainty of the input parameters as random variables $\boldsymbol{\xi}$ and expand the output quantity $Y$ as a linear combination of orthogonal polynomials like Hermite or Legendre. Once this approximation (surrogate model) is built, we can compute the mean, variance, probability density function, and sensitivity indices analytically from it. No additional simulations are needed.

I see! So while Monte Carlo is like "rolling dice endlessly," PCE is about "creating a smart function approximation"!

Exactly. However, there are caveats. When the number of input parameters exceeds 20, the number of polynomial terms explodes and efficiency drops. This is called the "curse of dimensionality." We'll discuss this in detail later, but to overcome this weakness, sparse PCE and adaptive methods are being researched.

Selection of Orthogonal Polynomial Basis

You mentioned several polynomial names like Hermite and Legendre. How do you choose which one to use?

This is actually a crucial point related to the foundation of PCE. The optimal orthogonal polynomial family is determined according to the probability distribution of the input variable—this is called the "Wiener-Askey correspondence."

| Input Probability Distribution | Optimal Orthogonal Polynomial | Domain | Typical Application |

|---|---|---|---|

| Normal (Gaussian) Distribution | Hermite Polynomial | $(-\infty, +\infty)$ | Variation in material properties |

| Uniform Distribution | Legendre Polynomial | $[-1, 1]$ | Design parameter range specification |

| Beta Distribution | Jacobi Polynomial | $[-1, 1]$ | Constrained parameters |

| Gamma Distribution | Laguerre Polynomial | $[0, +\infty)$ | Positive-valued load distributions |

| Poisson Distribution | Charlier Polynomial | $\{0, 1, 2, \ldots\}$ | Discrete event counts |

So there's a "compatible polynomial" determined for each distribution. What happens if you use the wrong combination?

Convergence slows down, or in the worst case, it diverges. For example, using Hermite polynomials for a uniform distribution input might require 10th order or more for a problem that would normally be sufficient with 3rd order. In practice, we combine appropriate polynomials for each parameter via tensor products, like "Hermite for Young's modulus because it's normally distributed" and "Legendre for plate thickness tolerance because it's uniformly distributed."

Governing Equations and Expansion Formula

Can you show me the basic PCE formula?

The PCE expansion for an output $Y$ with respect to $d$ random variables $\boldsymbol{\xi} = (\xi_1, \ldots, \xi_d)$ is as follows:

Here, the meaning of each symbol is:

- $c_{\boldsymbol{\alpha}}$ — PCE coefficients (the unknowns we want to find)

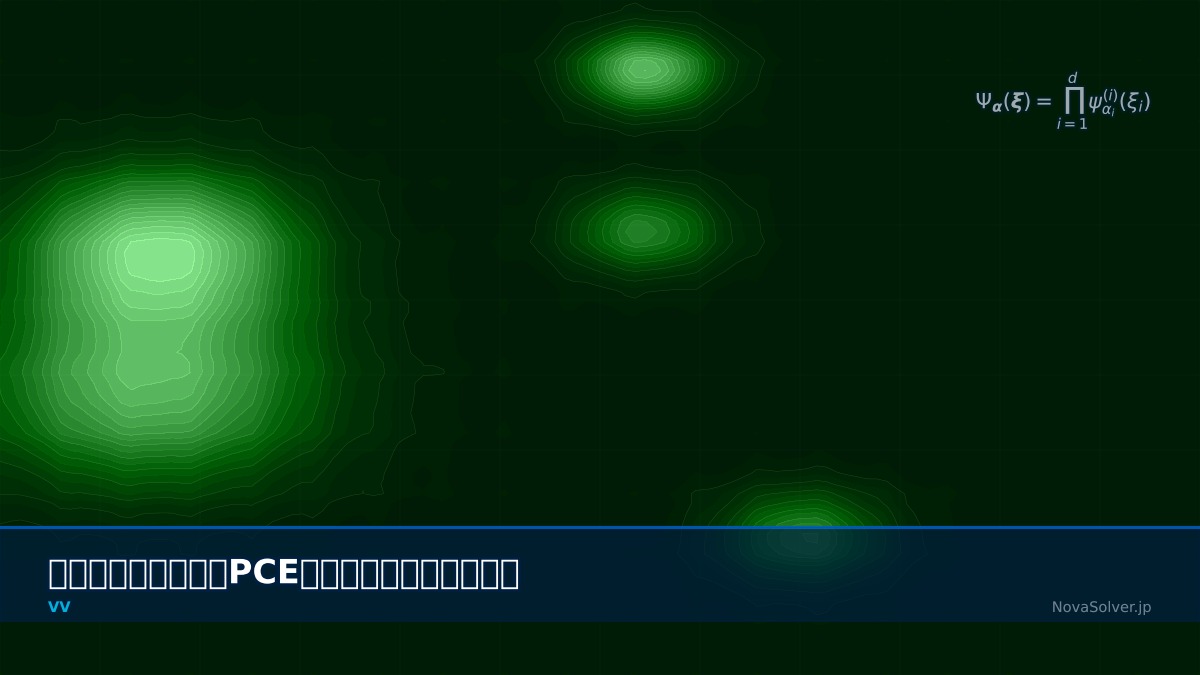

- $\Psi_{\boldsymbol{\alpha}}(\boldsymbol{\xi})$ — Multivariate orthogonal polynomial basis

- $\boldsymbol{\alpha} = (\alpha_1, \ldots, \alpha_d)$ — Multi-index (polynomial degree for each variable)

- $\mathcal{A}$ — Index set after truncation

How do you actually construct multivariate orthogonal polynomials?

They are constructed as the tensor product of univariate orthogonal polynomials for each variable:

For example, if $d=2$, $\xi_1$ is normally distributed (→Hermite $He_k$), and $\xi_2$ is uniformly distributed (→Legendre $P_l$), then $\Psi_{(2,1)}(\boldsymbol{\xi}) = He_2(\xi_1) \cdot P_1(\xi_2)$. Orthogonality means the inner product is zero:

Because of the orthogonality, you can determine each coefficient independently, just like Fourier series!

Exactly right. Thinking of the analogy with Fourier series makes it easier to understand. Fourier series expands periodic functions with trigonometric functions (orthogonal basis). PCE expands stochastic responses with orthogonal polynomials. The principle is exactly the same.

Deriving Statistics from Coefficients

Once you have the PCE coefficients, how do you calculate the mean and variance?

This is the most beautiful part of PCE. Thanks to orthogonality, statistics can be obtained directly from the coefficients:

Mean (the zeroth-order coefficient is the mean itself):

Variance (sum of squares of coefficients of order 1 and above):

What? You get the variance just by summing the squares of the coefficients? You don't need to sample tens of thousands of times like Monte Carlo!

Furthermore, skewness, kurtosis, and even the probability density function can be obtained analytically. For example, to get the probability density function, you apply Monte Carlo sampling to the PCE approximation $Y(\boldsymbol{\xi})$, but this is polynomial evaluation, not FEM, so it finishes in an instant. Even 100,000 samples are on the order of milliseconds.

Derivation of Sobol Sensitivity Indices

I heard you can also do sensitivity analysis from PCE?

This is arguably PCE's killer feature. Sobol sensitivity indices can be calculated directly from the PCE coefficients. The first-order Sobol index for the $i$-th variable is:

Here, $\mathcal{A}_i$ is the set of "terms that depend only on $\xi_i$" (i.e., $\alpha_j = 0 \; \forall j \neq i$ and $\alpha_i > 0$). The total Sobol index $S_i^T$ is similarly obtained from the coefficients of "all terms involving $\xi_i$."

Can you give me a concrete example? For a 3-parameter problem, for instance?

Good question. For example, in predicting residual stress in a weld, let's say the input parameters are heat input $Q$, welding speed $v$, and preheat temperature $T_0$. After performing PCE expansion and obtaining the coefficients, if the results are $S_Q = 0.65$, $S_v = 0.22$, $S_{T_0} = 0.08$, then you know that 65% of the variation in residual stress is due to uncertainty in heat input. This directly leads to design decisions like "heat input management should be the top priority."

Physical Meaning of Each Term

- PCE coefficient $c_{\boldsymbol{\alpha}}$: Represents the contribution of each polynomial basis to the output variation. Terms with large coefficients dominate the output uncertainty.

- Orthogonal polynomial basis $\Psi_{\boldsymbol{\alpha}}$: Corresponds to "basis vectors" in the input probability space. Orthogonality allows quantifying the contribution of each basis independently.

- Truncation order $p$: A parameter controlling the accuracy of the expansion. Higher order increases accuracy but also increases the number of terms and computational cost. In practice, $p=2\sim5$ is common.

Calculation of Number of Expansion Terms

When there are $d$ input variables and a total order truncation $p$, the number of expansion terms $P$ is:

$$ P = \binom{d+p}{p} = \frac{(d+p)!}{d! \, p!} $$| Input Dimension $d$ | Order $p=2$ | Order $p=3$ | Order $p=4$ |

|---|---|---|---|

| 3 | 10 | 20 | 35 |

| 5 | 21 | 56 | 126 |

| 10 | 66 | 286 | 1,001 |

| 20 | 231 | 1,771 | 10,626 |

Numerical Methods and Implementation

Galerkin Projection Method (Intrusive PCE)

What methods are there to find the PCE coefficients?

There are two main methods. First, let's explain the Galerkin projection method (intrusive PCE). Using orthogonality, the PCE coefficients $c_{\boldsymbol{\alpha}}$ are found via inner product:

It's elegant in theory, but how do you actually compute it? You need FEM results to compute the expectation, right?

Sharp observation. In the Galerkin projection method, you need to substitute the PCE expansion into the governing equations and modify the solver itself. For example, for the structural analysis stiffness equation $[K(\boldsymbol{\xi})]\{u(\boldsymbol{\xi})\} = \{F(\boldsymbol{\xi})\}$, you expand $K$, $u$, and $F$ with PCE and derive a system of equations via projection onto each basis. Theoretically, this is the most accurate, but it requires rewriting the internals of existing FEM solvers, making it difficult to use in practice. This is why it's called "intrusive."

Collocation/Regression Method (Non-Intrusive PCE)

Isn't there a method that doesn't require modifying the solver?

Yes, there is. The overwhelmingly used method in practice is Non-Intrusive PCE. It treats existing FEM solvers as black boxes. The procedure is:

- Generate $N$ sample points $\{\boldsymbol{\xi}^{(1)}, \ldots, \boldsymbol{\xi}^{(N)}\}$

- Run FEM at each sample point and obtain outputs $\{Y^{(1)}, \ldots, Y^{(N)}\}$

- Estimate coefficients via least squares

In matrix form, it's $\hat{\mathbf{c}} = (\boldsymbol{\Psi}^T \boldsymbol{\Psi})^{-1} \boldsymbol{\Psi}^T \mathbf{Y}$. Here, $\boldsymbol{\Psi}$ is the $N \times P$ design matrix ($\Psi_{k,\boldsymbol{\alpha}} = \Psi_{\boldsymbol{\alpha}}(\boldsymbol{\xi}^{(k)})$). This works with any solver output, whether it's Abaqus, Ansys, or OpenFOAM.

What's the minimum $N$ required?

The minimum condition is $N \geq P$ (samples greater than or equal to the number of terms), but in practice, an oversampling ratio of 2 to 3 times is recommended. That is, $N = 2P \sim 3P$. For example, if $d=5$, $p=3$, then $P=56$ terms, so $N = 112 \sim 168$ FEM runs are needed.

Selection of Numerical Quadrature Rules

Can you choose sample points randomly? Or are there specific rules?

Good question. The choice of sample points (design of experiments) directly affects PCE accuracy. Let's compare the main methods:

| Sampling Method | Characteristics | Required Sample Count | Recommended Scenario |

|---|---|---|---|

| Tensor Product Quadrature | Place Gauss points independently on each axis | $(p+1)^d$ (exponential increase) | Low dimension $d \leq 3$ |

| Smolyak Sparse Grid | "Thinned-out" version of tensor product | Significantly reduced | $d = 4 \sim 15$ |

| Latin Hypercube | Space-filling quasi-random sampling | Freely settable | Regression-based PCE |

| Sobol Sequence | Quasi-random numbers via low-discrepancy sequence | Freely settable | High-dimensional regression |

With tensor product, for 5 dimensions $(p+1)^5$ ... for order 4, that's $5^5 = 3125$ FEM runs needed. That's not realistic!

Related Topics

なった

詳しく

報告