Monolithic (Integrated) CHT Solution

Theory and Physics

Fundamental Concepts of Monolithic CHT

What is the fundamental difference between "Monolithic CHT" and the conventional "Segregated (separated)" approach? Is it just that the solvers solve them together?

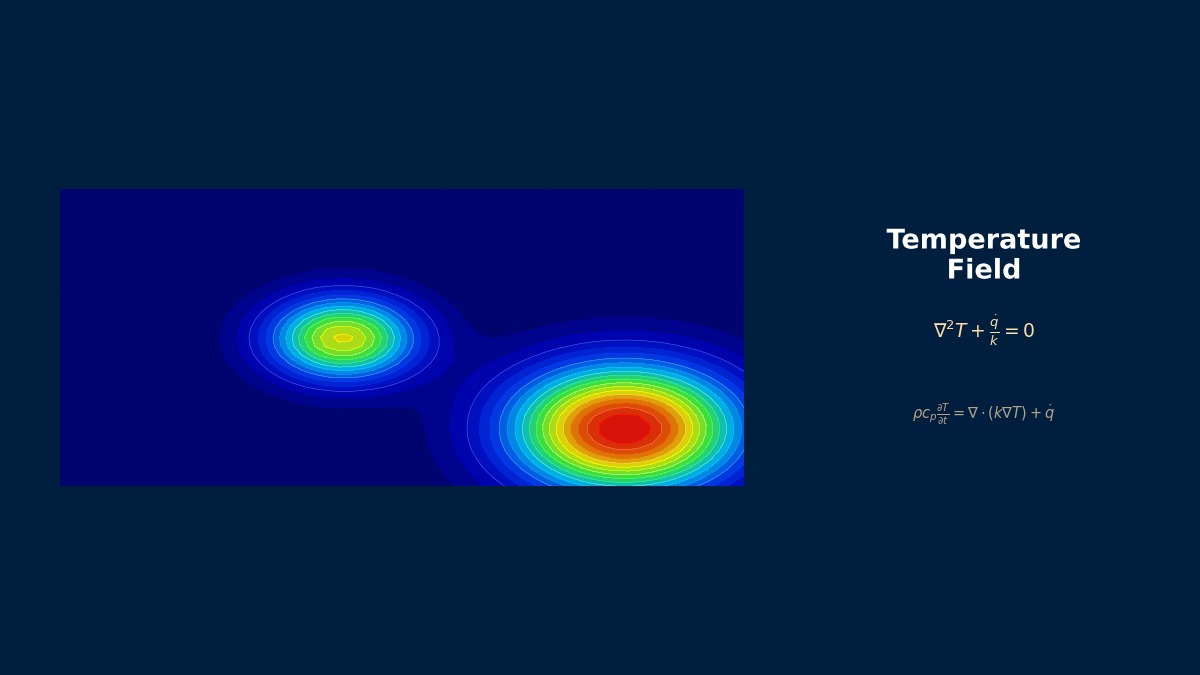

The fundamental difference lies in the stage of discretization and solution of the governing equations. The segregated method solves the fluid and solid equations alternately with separate solvers, exchanging data at the boundaries. In contrast, the monolithic method formulates them as a single system of equations from the outset. For example, it directly incorporates the unsteady heat conduction and fluid energy equations, with the continuity conditions of temperature and heat flux at the interface as internal boundary conditions. This inherently mitigates issues like iterative convergence delay at the interface and instability with small time steps.
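The difference can be seen in a deliberately tiny model. The sketch below is an illustration under stated assumptions, not how any particular solver is written: the fluid is reduced to a convective film with coefficient `h`, the solid to a slab of conductivity `k` and thickness `L` with a fixed back-face temperature, and all function names and numbers are hypothetical. The segregated loop passes wall temperature and heat flux back and forth, while the monolithic version solves the same flux balance in one step.

```python
# Toy model (assumption): fluid = convective film h [W/(m^2 K)],
# solid = slab with conductivity k [W/(m K)], thickness L [m], fixed back temperature.

def solve_monolithic(h, k, L, T_fluid, T_back):
    """Solve h*(T_fluid - Tw) = (k/L)*(Tw - T_back) for the wall temperature Tw directly."""
    g = k / L  # slab conductance per unit area
    return (h * T_fluid + g * T_back) / (h + g)

def solve_segregated(h, k, L, T_fluid, T_back, tol=1e-8, max_iter=10_000):
    """Alternate a 'fluid solve' and a 'solid solve' until the wall temperature settles."""
    Tw = T_back
    for it in range(1, max_iter + 1):
        q = h * (T_fluid - Tw)        # fluid side: wall temperature in, heat flux out
        Tw_new = T_back + q * L / k   # solid side: heat flux in, wall temperature out
        if abs(Tw_new - Tw) < tol:
            return Tw_new, it
        Tw = Tw_new
    return Tw, max_iter

if __name__ == "__main__":
    args = dict(h=100.0, k=2.0, L=0.01, T_fluid=500.0, T_back=20.0)
    print("monolithic:", solve_monolithic(**args))
    print("segregated (Tw, iterations):", solve_segregated(**args))
```

For this toy model the segregated loop shrinks the error by a factor of `h*L/k` per pass, so it needs many passes as that ratio approaches 1 and diverges above it, while the one-step solve is unaffected.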

What does it mean concretely to write the governing equations as "one system"? The variables used for fluid and solid should be different, right?

Good question. The variables are unified. In many implementations, temperature is adopted as the primary variable across the entire computational domain (fluid + solid). In the fluid domain, the energy equation is solved in temperature form alongside the Navier-Stokes and continuity equations. In the solid domain, the heat conduction equation is solved. When these are assembled into a single matrix, the result is a block structure: each set of unknowns (flow variables, fluid temperature, solid temperature) has its own diagonal block, and the off-diagonal blocks carry the coupling between them.
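One possible form of such a block system is sketched here. The notation is assumed and does not come from the original: $u$ collects the flow unknowns (velocity and pressure), $T_f$ and $T_s$ are the fluid and solid temperature unknowns, and the zero blocks assume the flow does not couple directly to the solid temperature.

```latex
\begin{bmatrix}
A_{uu} & A_{uT} & 0 \\
A_{Tu} & A_{ff} & A_{fs} \\
0      & A_{sf} & A_{ss}
\end{bmatrix}
\begin{bmatrix} u \\ T_f \\ T_s \end{bmatrix}
=
\begin{bmatrix} b_u \\ b_f \\ b_s \end{bmatrix}
```

In this sketch, $A_{fs}$ and $A_{sf}$ have nonzero entries only for unknowns adjacent to the interface, which is where the fluid and solid temperature equations meet.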

How is the "continuity of heat flux" at the interface enforced within the matrix? Does it require special treatment?

Yes. It is enforced through the weak-form boundary integral terms that arise when the interface elements (e.g., elements straddling fluid and solid) are discretized. Specifically, the conditions on the interface Γ are continuity of temperature and continuity of the normal heat flux.
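Written out as a sketch, with notation assumed here rather than taken from the original ($k_f$, $k_s$ are the fluid and solid conductivities, $\mathbf{n}_f = -\mathbf{n}_s$ are the outward unit normals of each domain on $\Gamma$, and $w$ is a test function), the interface conditions read:

```latex
T_f = T_s \quad \text{on } \Gamma,
\qquad
k_f \,\nabla T_f \cdot \mathbf{n}_f + k_s \,\nabla T_s \cdot \mathbf{n}_s = 0 \quad \text{on } \Gamma .
```

When both domains share the temperature unknowns on $\Gamma$, the first condition holds by construction, and the boundary integrals from the two sides cancel in the assembled weak form:

```latex
\int_{\Gamma} w \left( k_f \,\nabla T_f \cdot \mathbf{n}_f + k_s \,\nabla T_s \cdot \mathbf{n}_s \right) \mathrm{d}\Gamma = 0 .
```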

Numerical Methods and Implementation

Discretization and Solver Strategy

How do you efficiently solve that large block matrix? I think the matrix properties (asymmetric vs symmetric) are also different for fluid and solid.

That is precisely the key to implementation. The global matrix is large and nonsymmetric. Direct methods are impractical at that scale, so a Krylov subspace method (e.g., GMRES) combined with preconditioning is essential. One specific preconditioner choice is incomplete LU decomposition (ILU) for the fluid block.
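As a minimal sketch of the GMRES-plus-ILU combination, the following uses SciPy on a small nonsymmetric test matrix. Several things here are assumptions for illustration: the matrix comes from a 1D upwind convection-diffusion stencil rather than a real CHT assembly, ILU is applied to the whole matrix rather than block by block, and the function names and tolerances are hypothetical.

```python
import numpy as np
import scipy.sparse as sp
import scipy.sparse.linalg as spla

def demo_matrix(n=200, diffusion=1.0, convection=0.5):
    """Nonsymmetric tridiagonal matrix from a 1D upwind convection-diffusion stencil."""
    lower = -(diffusion + convection)
    main = 2.0 * diffusion + convection
    upper = -diffusion
    return sp.diags([lower, main, upper], [-1, 0, 1], shape=(n, n), format="csc")

def solve_gmres_ilu(A, b, drop_tol=1e-4, fill_factor=10.0):
    """Solve A x = b with restarted GMRES, preconditioned by an incomplete LU of A."""
    A = sp.csc_matrix(A)  # spilu works on CSC storage
    ilu = spla.spilu(A, drop_tol=drop_tol, fill_factor=fill_factor)
    # Wrap the ILU solve so GMRES can apply it as an approximate inverse of A.
    M = spla.LinearOperator(A.shape, matvec=ilu.solve)
    x, info = spla.gmres(A, b, M=M, restart=50, maxiter=200)
    return x, info  # info == 0 means GMRES reported convergence

if __name__ == "__main__":
    A = demo_matrix()
    b = np.ones(A.shape[0])
    x, info = solve_gmres_ilu(A, b)
    print("info:", info, "relative residual:", np.linalg.norm(A @ x - b) / np.linalg.norm(b))
```

In a real monolithic system the preconditioner would more likely be built per block, which this sketch does not attempt.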

For transient analysis, how is time integration handled? I feel the optimal time step size would be completely different for fluid and solid.

That is one of the major advantages of the monolithic method. Because the problem is treated as a single system of equations, an implicit integration method (e.g., the second-order backward differentiation formula, BDF2) can be applied to the whole system. The time step can then be chosen from the physics of interest rather than from the stability limits associated with the fluid CFL condition or the solid Fourier number. For example, in thermal response analysis of automotive engine components, it is necessary to handle the combustion cycle (a few ms) and the block's thermal soak (several seconds) simultaneously. With the monolithic method, you can set the time step to around 1 ms to 10 ms and solve stably. Abaqus/Standard's conjugate heat transfer analysis also employs similar implicit time integration.
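The stability claim can be illustrated with a small 1D model. This is a sketch under stated assumptions, not a CHT solver: the "fluid" is a stagnant air layer (conduction only, no flow), backward Euler (BDF1) is used instead of BDF2 to keep the code short, and the property values, grid, and function names are hypothetical. Both layers are advanced in one linear solve per step with a time step well above the explicit diffusion limit.

```python
import numpy as np

def build_system(k, rho_cp, dx, T_left, T_right):
    """Assemble conductance matrix K, cell capacities C and source b for a 1D rod of cells."""
    n = len(k)
    K = np.zeros((n, n))
    b = np.zeros(n)
    for i in range(n - 1):
        # Two half-cell resistances in series: the heat flux stays continuous
        # across the material interface without special interface code.
        g = 1.0 / (dx / (2.0 * k[i]) + dx / (2.0 * k[i + 1]))
        K[i, i] += g
        K[i + 1, i + 1] += g
        K[i, i + 1] -= g
        K[i + 1, i] -= g
    g_left, g_right = 2.0 * k[0] / dx, 2.0 * k[-1] / dx  # fixed-temperature ends
    K[0, 0] += g_left
    b[0] += g_left * T_left
    K[-1, -1] += g_right
    b[-1] += g_right * T_right
    return K, rho_cp * dx, b

def run(n_steps=200, dt=1e-2, dx=1e-4, n_half=20, T_left=500.0, T_right=20.0):
    """Advance an air layer plus a steel layer together with backward Euler."""
    k = np.array([0.026] * n_half + [50.0] * n_half)        # W/(m K), air then steel
    rho_cp = np.array([1.2e3] * n_half + [3.9e6] * n_half)  # J/(m^3 K), rough values
    K, C, b = build_system(k, rho_cp, dx, T_left, T_right)
    A = np.diag(C / dt) + K                                 # one matrix for both materials
    T = np.full(2 * n_half, T_right)
    for _ in range(n_steps):
        T = np.linalg.solve(A, C / dt * T + b)
    dt_explicit = np.min(dx**2 / (2.0 * k / rho_cp))        # explicit diffusion limit
    return T, dt_explicit

if __name__ == "__main__":
    T, dt_explicit = run()
    print("explicit limit [s]:", dt_explicit, "T range:", T.min(), T.max())
```

With these numbers the step of 10 ms is roughly forty times the explicit limit, yet the temperatures stay between the two boundary values.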

Does the mesh need to be matching (conformal) at the interface? With non-matching meshes, can that coupling term still be evaluated?

Matching meshes are not strictly necessary. Non-matching meshes are handled with "mapping" techniques: during integration at the interface, the temperature or heat flux at nodes on one mesh (e.g., the fluid side) is interpolated using the shape functions of the other mesh (the solid side).

Practical Guide

Workflow and Verification

When starting an analysis with monolithic CHT, what should be checked/set first?

First, check the order of magnitude of the material properties, especially thermal conductivity and volumetric heat capacity. Air's thermal conductivity is about 0.026 W/(m·K), while steel's is about 50 W/(m·K), a ratio of roughly 2000 (more than three orders of magnitude). In the monolithic method, these values enter the same matrix directly, which worsens the condition number and can cause the solver to diverge. Countermeasures include non-dimensionalizing the problem or enabling the software's scaling options. When performing CHT in ANSYS Mechanical APDL, you must strictly check the consistency of the unit system when inputting properties with the `MP` command.
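The effect of the property jump on conditioning, and of a simple scaling, can be sketched on a 1D conduction matrix. This is an illustration only: the symmetric diagonal (Jacobi) scaling used here is one assumed choice of scaling, not the option any particular package applies, and the function names are hypothetical.

```python
import numpy as np

def conduction_matrix(k_cells):
    """1D steady conduction matrix on unit-size cells with fixed-temperature ends."""
    n = len(k_cells)
    K = np.zeros((n, n))
    for i in range(n - 1):
        # Harmonic-type face conductance between neighbouring cells.
        g = 2.0 * k_cells[i] * k_cells[i + 1] / (k_cells[i] + k_cells[i + 1])
        K[i, i] += g
        K[i + 1, i + 1] += g
        K[i, i + 1] -= g
        K[i + 1, i] -= g
    K[0, 0] += 2.0 * k_cells[0]
    K[-1, -1] += 2.0 * k_cells[-1]
    return K

def jacobi_scale(K):
    """Symmetric diagonal scaling D^(-1/2) K D^(-1/2), giving a unit diagonal."""
    d = 1.0 / np.sqrt(np.diag(K))
    return d[:, None] * K * d[None, :]

if __name__ == "__main__":
    k_cells = np.array([0.026] * 20 + [50.0] * 20)  # air cells, then steel cells
    K = conduction_matrix(k_cells)
    print("condition number, raw:   ", np.linalg.cond(K))
    print("condition number, scaled:", np.linalg.cond(jacobi_scale(K)))
```

Printing both values shows how much of the ill-conditioning comes from the conductivity jump itself rather than from the mesh.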

How can I verify the analysis results to say they are reliable? Just looking at the temperature distribution isn't enough, right?

Exactly. At a minimum, quantitatively check the following two points.

1. **Interface Heat Flux Balance**: Does the net heat flux from fluid to solid and the heat flux conducted within the solid satisfy the conservation law in steady state? For example, verify that the difference in integrated heat flux at the interface is within 1% of the total input heat.
2. **Temperature History at Representative Points**: For transient analysis, does the temperature rise curve at a specific point inside the solid qualitatively match that from a simplified 1D heat conduction analysis (e.g., with a fixed heat transfer coefficient)?

In practice, comparison with experimental data specified in standards like JIS B 8622 (General rules for cooling tower performance testing) or SAE standards (e.g., related to engine cooling) is the gold standard.
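The first check reduces to a small calculation once the integrated heat rates have been exported from the solver. The helper below is a hypothetical sketch: the function names are made up, and the inputs are assumed to be surface-integrated heat rates in watts.

```python
def interface_flux_imbalance(q_fluid_side, q_solid_side, q_input_total):
    """Mismatch of the integrated interface heat rates [W], relative to the total input [W]."""
    if q_input_total == 0:
        raise ValueError("total input heat must be nonzero")
    return abs(q_fluid_side - q_solid_side) / abs(q_input_total)

def is_balanced(q_fluid_side, q_solid_side, q_input_total, tol=0.01):
    """True if the interface mismatch is within tol (1% by default) of the total input."""
    return interface_flux_imbalance(q_fluid_side, q_solid_side, q_input_total) <= tol

if __name__ == "__main__":
    # Made-up numbers: 1000 W leaves the fluid, 995 W enters the solid.
    print(interface_flux_imbalance(1000.0, 995.0, 1000.0))
    print(is_balanced(1000.0, 995.0, 1000.0))
```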

For mesh dependency study, do you do it separately for fluid and solid? Or all together including the interface?

It should be done all together, including the interface, because the accuracy of the monolithic method depends strongly on the mesh resolution near the interface. The procedure is as follows. First, generate boundary layer meshes on the fluid side and solid meshes that resolve the thermal penetration depth. Then systematically refine the overall mesh size (e.g., 1.0, 0.7, and 0.5 times the base size) and confirm that the change in monitored quantities (e.g., average heat transfer coefficient at the interface or maximum solid temperature) converges within, say, 2%. Altair AcuSolve's tutorial recommends maintaining the element aspect ratio near the interface below 5 as a mesh dependency verification for CHT.
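The convergence criterion can be checked with a few lines once the monitored quantity has been recorded for each mesh. This is a hypothetical helper with made-up numbers. It assumes the values are listed from the coarsest to the finest mesh.

```python
def relative_changes(values):
    """Relative change of a monitored quantity between successive mesh levels."""
    return [abs(fine - coarse) / abs(fine) for coarse, fine in zip(values, values[1:])]

def is_mesh_converged(values, tol=0.02):
    """True if the change between the two finest meshes is within tol (2% by default)."""
    if len(values) < 2:
        raise ValueError("need results from at least two meshes")
    return relative_changes(values)[-1] <= tol

if __name__ == "__main__":
    # Made-up maximum solid temperatures [K] for base sizes 1.0, 0.7 and 0.5.
    t_max = [412.0, 398.0, 395.0]
    print(relative_changes(t_max))
    print(is_mesh_converged(t_max))
```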

Software Comparison

Implementation and Features of Each Solver

Which software advertises "Monolithic CHT"? Also, how can I tell if software is just doing segregated internally?

Software implementing a truly monolithic solution is currently limited. COMSOL Multiphysics is inherently monolithic because it describes fluid and solid equations as one system via its "Coefficient Form PDE" interface. On the other hand, ANSYS Fluent's "Coupled Thermal Boundary Condition" can be considered "partially monolithic" as it couples only the energy equation at the interface, solving it separately from the momentum equations. One way to tell is to check the solver output log: see whether the fluid and solid temperature fields are updated in "one linear solver call" or in separate "outer/inner iterations".

What about free/open-source software? Is OpenFOAM's `chtMultiRegionFoam` monolithic?

OpenFOAM's `chtMultiRegionFoam` is, by default, a **segregated method**. It calls the fluid solver (`buoyantSimpleFoam`, etc.) and the solid solver (`solidTemperature`) separately, running an "outer iteration" loop that exchanges temperature and heat flux at the interface. However, experimental implementations that solve the energy equation part monolithically exist in development versions or derived solvers. For a complete monolithic method, you would likely need to implement custom elements in open-source finite element-based codes like `fe40` or `CalculiX`.

Abaqus and ANSYS Mechanical specialize in structural heat conduction, but can they do CHT with fluid?

By themselves, they operate via "coupling with external CFD software". Abaqus/Standard has a "Conjugate Heat Transfer Analysis" function, but this handles heat conduction between multiple solid domains and does not include fluid. To perform CHT involving fluid, you would use Abaqus CFD (formerly linked with Dassault Systèmes' `PowerFLOW`) or, in ANSYS's case, use `System Coupling` to bidirectionally couple Fluent (fluid) and Mechanical (solid). This is a segregated method between software, with the drawback of high computational cost and data transfer overhead.

Troubleshooting

Countermeasures for Divergence and Errors

The analysis diverges abruptly in the first few steps. There's no obvious error in material properties or boundary conditions.

The most common cause is "initial field mismatch". Especially if the solid's initial temperature is set to a uniform room temperature (20°C), an extreme temperature gradient occurs at the interface with the incoming high-temperature fluid (e.g., 500°C). This can lead to a physically unrealistic, huge heat flux calculated in the first time step, causing the solver to fail. Countermeasures are: set the solid's initial temperature close to the fluid inflow temperature, or apply a "ramp function" that starts the physical field very slowly using extremely small time steps (e.g., 1e-6 seconds) for the first few tens of steps. Using Siemens Star-CCM+'s `Field Function`, such initialization profiles can be easily set.
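A ramp of this kind can be sketched as two small functions, one for a boundary value such as the inlet temperature and one for the time step. The names, the linear and geometric ramp shapes, and the target time step of 1e-3 s are assumptions for illustration. This is plain Python, not Star-CCM+ field function syntax.

```python
def ramp(step, n_ramp, start, end):
    """Linear ramp from start to end over n_ramp steps, constant afterwards."""
    if n_ramp <= 0:
        return end
    frac = min(max(step / n_ramp, 0.0), 1.0)
    return start + frac * (end - start)

def ramped_time_step(step, n_ramp=50, dt_start=1e-6, dt_end=1e-3):
    """Time step that grows geometrically from dt_start to dt_end over n_ramp steps."""
    frac = min(max(step / n_ramp, 0.0), 1.0)
    return dt_start * (dt_end / dt_start) ** frac

if __name__ == "__main__":
    for step in (0, 10, 25, 50, 100):
        # Inlet temperature ramped from 20 degC to 500 degC, as in the example above.
        print(step, ramp(step, 50, 20.0, 500.0), ramped_time_step(step))
```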

In transient analysis, the interface temperature "oscillates". It doesn't improve even if I make the time step finer.

This is a numerical "stiffness" problem that occurs when the thermal properties of the fluid and solid, in particular their thermal diffusivities, are extremely different. It is manageable for air (thermal diffusivity α ≈ 2e-5 m²/s) and copper (α ≈ 1e-4 m²/s), but becomes pronounced for air and resin (α ≈ 1e-7 m²/s). There are two countermeasures. 1) Change the time integration scheme to a more strongly damped one (e.g., an L-stable scheme such as BDF1 or BDF2 rather than Crank-Nicolson). 2) Tighten the solver convergence criteria. Particularly in COMSOL, reducing the "relative tolerance" from the default 0.01 to below 0.001 often suppresses this oscillation. Fundamentally, the mesh near the interface needs to be finer on the side of the material with lower thermal diffusivity (= poorer heat conduction).
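How fine the near-interface mesh needs to be can be estimated from the diffusion length scale sqrt(α·Δt), the order-of-magnitude distance heat travels in one time step. The sketch below uses the diffusivities quoted above. The function names and the "five cells per penetration depth" rule are assumptions for illustration, not a published criterion.

```python
import math

# Thermal diffusivities [m^2/s] as quoted in the text.
ALPHA = {"air": 2e-5, "copper": 1e-4, "resin": 1e-7}

def penetration_depth(alpha, dt):
    """Order-of-magnitude distance [m] that heat diffuses during one time step dt [s]."""
    return math.sqrt(alpha * dt)

def suggested_first_cell(alpha, dt, cells_per_depth=5):
    """First-cell size [m] that places cells_per_depth cells inside the penetration depth."""
    return penetration_depth(alpha, dt) / cells_per_depth

if __name__ == "__main__":
    dt = 1e-3  # example time step [s]
    for name, alpha in ALPHA.items():
        print(name, penetration_depth(alpha, dt), suggested_first_cell(alpha, dt))
```

For the same time step, the resin penetration depth comes out more than ten times smaller than that of air, which is why the low-diffusivity side needs the finer mesh.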

In steady-state analysis, the residuals drop, but the interface heat flux does not converge to a constant value. Why?

That phenomenon indicates the solver is reducing the "local" residuals of mass, momentum, and energy conservation, but the "global" heat balance has not been achieved. Two main causes are possible. 1) **Relaxation factors are too strong**: Especially if strong under-relaxation is applied to the solid heat conduction equation, the update amounts become too small, requiring a vast number of iterations to reach overall thermal equilibrium. 2) **Boundary condition leakage**: The external insulation boundary for the solid is not perfect, or the heat flux at the fluid outflow boundary is not zero (e.g., convective outflow). As a countermeasure, monitor the global heat balance (inflow heat = internal heat generation in solid + losses from the system) and continue the calculation until this converges. In ANSYS Fluent, you can monitor integrated heat flux on boundaries using `Surface Monitor`.
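The cost of strong under-relaxation can be estimated with a simplified model. It assumes, for illustration only, that each iteration moves the solution a fraction ω of the way to the converged state, so the remaining error is multiplied by (1 - ω) per iteration. Real solvers behave less cleanly, and the function name is hypothetical.

```python
import math

def iterations_to_reduce(omega, reduction=1e-3):
    """Iterations needed to shrink the error by `reduction` if each sweep scales it by (1 - omega)."""
    if not 0.0 < omega <= 1.0:
        raise ValueError("omega must be in (0, 1]")
    if omega == 1.0:
        return 1
    return math.ceil(math.log(reduction) / math.log(1.0 - omega))

if __name__ == "__main__":
    for omega in (0.5, 0.1, 0.01):
        print(omega, iterations_to_reduce(omega))
```

Under this model the iteration count grows roughly in proportion to 1/ω, which is why local residuals can look small long before the global heat balance has settled.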

When I used a non-matching mesh, the temperature distribution at the interface became "jagged". Is this within acceptable error?

That is a typical symptom of "numerical heat flux leakage" due to mapping error. It is not acceptable. When using non-matching meshes, check the following points.

1. **Mapping method**: Simple nearest-neighbor interpolation is insufficient; at least linear interpolation, ideally a projection method guaranteeing conservation, should be used.
2. **Mesh size ratio**: If the ratio of element size on the coarse mesh side to that on the fine mesh side exceeds 5, the error becomes significant. Try to keep it within 2 times if possible.
3. **Interface mesh quality**: If the aspect ratio of the boundary layer mesh on the fluid side is too large, the interpolation accuracy for the temperature gradient normal to the interface drops.

When using GGI in OpenFOAM, tightening parameters like `tolerance` or `relTol` (to around 1e-6) can sometimes improve it. The fundamental solution is to increase the mesh resolution near the interface to comparable levels on both sides.
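The difference between nearest-neighbor and conservative mapping can be shown on a 1D interface. This is a minimal sketch, assuming piecewise-constant heat flux on each face and an overlap-weighted average as the conservative projection. It is not the algorithm of GGI or of any specific solver, and the function names and data are hypothetical.

```python
import numpy as np

def conservative_map(src_edges, src_flux, dst_edges):
    """Overlap-weighted transfer of face heat flux [W/m^2] between two 1D face sets."""
    src_edges = np.asarray(src_edges, dtype=float)
    src_flux = np.asarray(src_flux, dtype=float)
    dst_edges = np.asarray(dst_edges, dtype=float)
    dst_flux = np.zeros(len(dst_edges) - 1)
    for j in range(len(dst_edges) - 1):
        lo, hi = dst_edges[j], dst_edges[j + 1]
        # Length of each source face that lies inside destination face j.
        overlap = np.clip(np.minimum(hi, src_edges[1:]) - np.maximum(lo, src_edges[:-1]), 0.0, None)
        dst_flux[j] = np.dot(overlap, src_flux) / (hi - lo)
    return dst_flux

def nearest_map(src_edges, src_flux, dst_edges):
    """Copy the flux of the source face whose centre is closest to each destination centre."""
    src_c = 0.5 * (np.asarray(src_edges[:-1]) + np.asarray(src_edges[1:]))
    dst_c = 0.5 * (np.asarray(dst_edges[:-1]) + np.asarray(dst_edges[1:]))
    idx = np.abs(dst_c[:, None] - src_c[None, :]).argmin(axis=1)
    return np.asarray(src_flux, dtype=float)[idx]

def total_heat(edges, flux):
    """Integrated heat rate per unit depth [W/m]: sum of flux times face length."""
    return float(np.dot(np.diff(edges), flux))

if __name__ == "__main__":
    fine = np.linspace(0.0, 1.0, 11)    # 10 fine faces (e.g., fluid side)
    coarse = np.linspace(0.0, 1.0, 5)   # 4 coarse faces (e.g., solid side)
    centres = 0.5 * (fine[:-1] + fine[1:])
    q_fine = 1000.0 * (1.0 + centres**2)
    print("source total:      ", total_heat(fine, q_fine))
    print("conservative total:", total_heat(coarse, conservative_map(fine, q_fine, coarse)))
    print("nearest total:     ", total_heat(coarse, nearest_map(fine, q_fine, coarse)))
```

The conservative mapping reproduces the source total, while the nearest-neighbor mapping gains or loses heat at the interface.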
