Mesh Quality Prediction Model

Overview

Professor! Today we're talking about the mesh quality prediction model, right? What exactly is it?

Theory and Physics

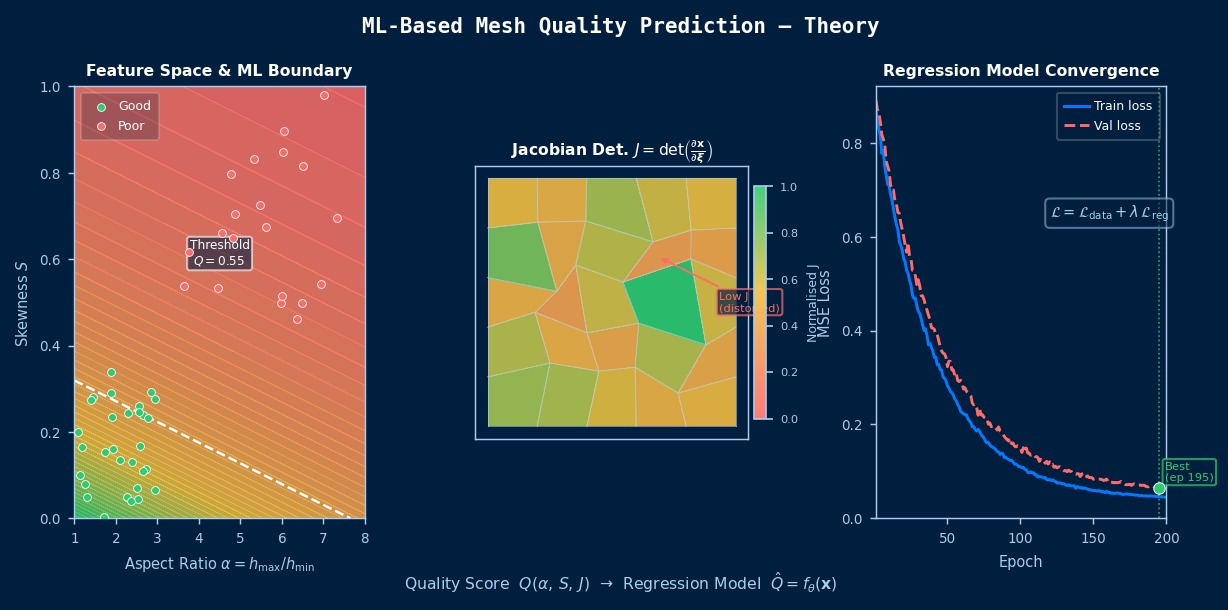

The mesh quality prediction model uses ML to predict mesh quality metrics (aspect ratio, skewness, etc.) in advance, so that poor-quality meshes are not generated in the first place. It automatically detects quality defects and suggests corrections.

Governing Equations

Expressing this as an equation looks like this.

Hmm, just the equation alone doesn't really click for me... What does it represent?

Aspect Ratio and Skewness:
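
As a sketch, one common way to write these two metrics is shown here. The edge-length form of the aspect ratio matches the "longest side/shortest side" description used later in this article; the equiangular form of skewness is an assumption, since the text does not say which skewness definition is intended.

$$
\mathrm{AR} = \frac{l_{\max}}{l_{\min}}, \qquad
\mathrm{Skew} = \max\left( \frac{\theta_{\max} - \theta_e}{180^\circ - \theta_e},\ \frac{\theta_e - \theta_{\min}}{\theta_e} \right)
$$

Here $l_{\max}$ and $l_{\min}$ are the longest and shortest edge lengths of the element, $\theta_{\max}$ and $\theta_{\min}$ are its largest and smallest interior angles, and $\theta_e$ is the angle of the ideal element ($60^\circ$ for a triangle, $90^\circ$ for a quadrilateral). An ideal element has $\mathrm{AR} = 1$ and $\mathrm{Skew} = 0$.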

Your explanation is easy to understand! My confusion about aspect ratio and skewness has cleared up.

Theoretical Foundation

I've heard of "theoretical foundation," but I might not fully understand it...

The mesh quality prediction model aims to combine data-driven approaches with physics-based modeling. Computational cost is a major bottleneck in conventional CAE analysis, and introducing a mesh quality prediction model can improve the trade-off between computational efficiency and prediction accuracy. Its mathematical foundation lies in function approximation theory and statistical learning theory; open theoretical questions include guarantees of generalization performance and rigorous convergence analysis. In practice, a key issue is the "curse of dimensionality" when the input dimension is high, which makes dimensionality reduction and exploiting sparsity important.

Application Conditions and Assumptions

Professor, please tell me about "Application Conditions and Assumptions"!

Before using this method, consider the quality and quantity of the input data, the range over which the model's assumptions hold, and the available computational resources. Verification against known analytical solutions or benchmark problems is essential for confirming physical validity. Use theoretical error estimates to judge the balance between required accuracy and computational cost.

Details of Mathematical Formulation

Next is "Details of Mathematical Formulation"! What kind of content is this?

This section outlines the basic mathematical framework for applying machine learning models to CAE.

Loss Function Composition

What does "loss function composition" specifically mean?

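The loss function in AI×CAE is composed as a weighted sum of a data-driven term, a physics constraint term, and a regularization term:

$$
\mathcal{L} = \mathcal{L}_{\text{data}} + \lambda_{\text{physics}}\,\mathcal{L}_{\text{physics}} + \lambda_{\text{reg}}\,\mathcal{L}_{\text{reg}}
$$

Here, $\mathcal{L}_{\text{data}}$ is the squared error with observed data, $\mathcal{L}_{\text{physics}}$ is the residual of the governing equations, and $\mathcal{L}_{\text{reg}}$ is the regularization term. The choice of the weight parameters $\lambda_{\text{physics}}$ and $\lambda_{\text{reg}}$ greatly affects learning stability and accuracy.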
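
A minimal sketch of such a weighted-sum loss, assuming mean squared error for the data term, a mean squared residual for the physics term, and an L2 penalty for regularization. The function name `composite_loss`, the argument names, and the default weights are hypothetical, chosen only for illustration.

```python
import numpy as np

def composite_loss(pred, target, residual, params,
                   lam_physics=1.0, lam_reg=1e-4):
    """Weighted sum of data, physics, and regularization terms (illustrative)."""
    pred = np.asarray(pred, dtype=float)
    target = np.asarray(target, dtype=float)
    residual = np.asarray(residual, dtype=float)
    params = np.asarray(params, dtype=float)

    # Data term: mean squared error against observed data.
    loss_data = np.mean((pred - target) ** 2)
    # Physics term: mean squared residual of the governing equations
    # (the residual itself is assumed to be computed elsewhere).
    loss_physics = np.mean(residual ** 2)
    # Regularization term: L2 penalty on the model parameters (an assumption).
    loss_reg = np.sum(params ** 2)

    return loss_data + lam_physics * loss_physics + lam_reg * loss_reg
```

Raising `lam_physics` pushes training toward satisfying the governing equations at the expense of fitting the data, so in practice the weights are usually tuned rather than fixed in advance.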

Generalization Performance and Extrapolation Problem

Please tell me about "Generalization Performance and the Extrapolation Problem"!

The biggest challenge for surrogate models is prediction accuracy outside the range of training data (extrapolation region). Incorporating physical laws can improve extrapolation performance, but complete guarantees are difficult.

Curse of Dimensionality

Please tell me about the "Curse of Dimensionality"!

When the dimension of the input parameter space is high, the required number of samples increases exponentially. Efficient sample placement through Active Learning or Latin Hypercube Sampling (LHS) is extremely important.
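
As a rough illustration of Latin Hypercube Sampling, the sketch below draws points in the unit cube using only NumPy. The function name `latin_hypercube` and its arguments are hypothetical; scaling the points to real parameter ranges is left out.

```python
import numpy as np

def latin_hypercube(n_samples, n_dims, seed=None):
    """Return an (n_samples, n_dims) array of LHS points in [0, 1)."""
    rng = np.random.default_rng(seed)
    # Split [0, 1) into n_samples equal strata and draw one random
    # point inside each stratum, independently for every dimension.
    strata = np.arange(n_samples)[:, None]
    samples = (strata + rng.random((n_samples, n_dims))) / n_samples
    # Shuffle each column separately so the strata are paired at random
    # across dimensions instead of lying along the diagonal.
    for d in range(n_dims):
        samples[:, d] = rng.permutation(samples[:, d])
    return samples

points = latin_hypercube(8, 3, seed=0)
```

Each one-dimensional projection of `points` has exactly one sample per stratum, which is what gives LHS better coverage than plain random sampling for the same number of samples.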

Assumption Conditions and Application Limits

If you use it without knowing the prerequisites, what kind of failures can occur?

- The training data sufficiently represents the physics of the analysis target.

- The relationship between input parameters and output is smooth (if there are discontinuities, domain partitioning is necessary).

- Reducing computational cost is the main objective, and conventional solvers should be used in conjunction for final verification requiring high accuracy.

- If the quality of training data (mesh-converged, V&V completed) is insufficient, the model's reliability decreases.

Ah, I see! So the training data has to actually represent the physics of the analysis target.

Dimensionless Parameters and Dominant Scales

I've heard of "Dimensionless Parameters and Dominant Scales," but I might not fully understand it...

Understanding the dimensionless parameters governing the physical phenomenon being analyzed is the foundation for appropriate model selection and parameter setting.

- Peclet Number Pe: Relative importance of convection and diffusion. Pe >> 1 indicates convection-dominated (stabilization methods required).

- Reynolds Number Re: Ratio of inertial forces to viscous forces. A fundamental parameter for fluid problems.

- Biot Number Bi: Ratio of internal conduction resistance to surface convection resistance. For Bi < 0.1, the lumped capacitance method can be applied.

- Courant Number CFL: Indicator of numerical stability. Explicit methods typically require CFL ≤ 1, although the exact limit depends on the scheme.
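
The four parameters above can be computed with a few one-line helpers. This is a sketch using the standard textbook definitions; the function and argument names are hypothetical, and the example values are illustrative rather than taken from any particular analysis.

```python
def reynolds(density, velocity, length, viscosity):
    """Re = rho * U * L / mu (inertial vs. viscous forces)."""
    return density * velocity * length / viscosity

def peclet(velocity, length, diffusivity):
    """Pe = U * L / D (convection vs. diffusion)."""
    return velocity * length / diffusivity

def biot(heat_transfer_coeff, length, conductivity):
    """Bi = h * L / k (conduction resistance vs. convection resistance)."""
    return heat_transfer_coeff * length / conductivity

def courant(velocity, time_step, cell_size):
    """CFL = U * dt / dx (cells crossed per time step)."""
    return velocity * time_step / cell_size

# Illustrative values only: a small metal part cooled by air.
bi = biot(heat_transfer_coeff=10.0, length=0.01, conductivity=200.0)
lumped_ok = bi < 0.1  # lumped capacitance criterion from the list above
```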

Ah, I see! So the dimensionless parameters tell me which physics dominates the phenomenon being analyzed.

Verification via Dimensional Analysis

Please tell me about "Verification via Dimensional Analysis"!

Dimensional analysis based on Buckingham's Π theorem is effective for order-of-magnitude estimation of analysis results. Using characteristic length $L$, characteristic velocity $U$, and characteristic time $T = L/U$, estimate the order of each physical quantity in advance to confirm the validity of the analysis results.

Classification of Boundary Conditions and Mathematical Characteristics

I've heard that if you get the boundary conditions wrong, everything fails...

| Type | Mathematical Expression | Physical Meaning | Example |
|---|---|---|---|
| Dirichlet Condition | $u = u_0$ on $\Gamma_D$ | Specification of variable value | Fixed wall, specified temperature |
| Neumann Condition | $\partial u/\partial n = g$ on $\Gamma_N$ | Specification of gradient (flux) | Heat flux, force |
| Robin Condition | $\alpha u + \beta \partial u/\partial n = h$ | Linear combination of variable and gradient | Convective heat transfer |
| Periodic Boundary Condition | $u(x) = u(x+L)$ | Spatial periodicity | Unit cell analysis |

Choosing appropriate boundary conditions is directly linked to solution uniqueness and physical validity. Insufficient boundary conditions lead to an ill-posed problem, while excessive boundary conditions create contradictions.

I've grasped the overall picture of the mesh quality prediction model! I'll try to be mindful of it in my practical work starting tomorrow.

Yeah, you're on the right track! Actually getting your hands dirty is the best way to learn. If you have any questions, feel free to ask anytime.

The "Physical Meaning" of Mesh Quality Metrics — Why Aspect Ratio Ruins Solution Accuracy

Mesh quality metrics (aspect ratio, Jacobian, skewness, etc.) are numerical values derived from finite element error analysis. For example, when the aspect ratio (longest side/shortest side) becomes large, the element's shape functions become biased in one direction, which worsens the condition number of the stiffness matrix and makes the solution of the linear system unstable. As a rule of thumb, an aspect ratio ≤ 3 is recommended for many solvers, but in boundary layer meshes (near walls in fluid analysis), aspect ratios of 100 or more are intentionally allowed. There the flow runs nearly parallel to the wall, so the solution varies slowly along the wall and steeply normal to it, and elements stretched along the wall still resolve it well. Whether ML can learn this kind of physical context-dependence when it flags "poor-quality meshes" is the real test of the algorithm.
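
To make the metrics concrete, here is a small sketch that computes the edge-length aspect ratio and an equiangular skewness for a single 2D triangle. The function name `triangle_quality` is hypothetical, and the equiangular skewness definition is an assumption; individual meshers and solvers may define these metrics differently.

```python
import math

def triangle_quality(p0, p1, p2):
    """Return (aspect_ratio, skewness) for a non-degenerate 2D triangle."""
    # Edge lengths; a zero-length edge would raise ZeroDivisionError below.
    a = math.dist(p1, p2)
    b = math.dist(p2, p0)
    c = math.dist(p0, p1)
    aspect_ratio = max(a, b, c) / min(a, b, c)

    def angle_deg(opposite, s1, s2):
        # Law of cosines, clamped to guard against rounding error.
        cos_t = (s1 ** 2 + s2 ** 2 - opposite ** 2) / (2.0 * s1 * s2)
        return math.degrees(math.acos(max(-1.0, min(1.0, cos_t))))

    angles = [angle_deg(a, b, c), angle_deg(b, c, a), angle_deg(c, a, b)]
    ideal = 60.0  # interior angle of an equilateral triangle
    skewness = max((max(angles) - ideal) / (180.0 - ideal),
                   (ideal - min(angles)) / ideal)
    return aspect_ratio, skewness

# A thin sliver of the kind found in stretched near-wall layers.
ar, skew = triangle_quality((0.0, 0.0), (10.0, 0.0), (0.0, 1.0))
```

A fixed threshold on `ar` would reject this element, which is exactly the context-dependence discussed above: the same value can be acceptable in a boundary layer and harmful elsewhere.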

Physical Meaning of Each Term

- Time Variation Term of Conserved Quantity: Represents the rate of change over time of the target physical quantity. Becomes zero for steady-state problems. Intuition: when filling a bathtub with hot water, the water level rises over time, and this "rate of change per time" is the time variation term. The state where the valve is closed and the water level is constant is "steady," and the time variation term is zero.

- Flux Term (Flow Term): Describes the spatial transport/diffusion of a physical quantity. Broadly classified into convection and diffusion. Intuition: convection is like "a river's flow carrying a boat," where things are carried by the flow. Diffusion is like "ink naturally spreading in still water," where things move due to concentration differences. The competition between these two transport mechanisms governs many physical phenomena.

- Source Term (Generation/Destruction Term): Represents the local generation or destruction of a physical quantity due to external forces/reactions. Intuition: when you turn on a heater in a room, thermal energy is "generated" at that location. When fuel is consumed in a chemical reaction, mass is "destroyed." This term represents physical quantities injected into or removed from the system.

Assumption Conditions and Application Limits

- The spatial scale is such that the continuum assumption holds.

- The constitutive laws of materials/fluids (stress-strain relation, Newtonian fluid law, etc.) are within their applicable range.

- Boundary conditions are physically valid and mathematically well-defined.

Dimensional Analysis and Unit Systems

| Variable | SI Unit | Notes / Conversion Memo |
|---|---|---|
| Characteristic Length $L$ | m | Must match the unit system of the CAD model. |
| Characteristic Time $t$ | s | For transient analysis, time step should consider CFL condition and physical time constant. |

Numerical Methods and Implementation

This section explains the numerical methods and algorithms used to implement the mesh quality prediction model.

Now I understand what my senior meant when they said, "Make sure you do the mesh quality prediction model properly."

Discretization and Calculation Procedure

How do you actually solve this equation on a computer?

As data preprocessing, normalization/standardization of input features is important. Since CAE data have vastly different scales for each physical quantity, it's necessary to appropriately choose methods like Min-Max normalization or Z-score normalization. In selecting the learning algorithm, choose an appropriate method according to data volume, dimensionality, and degree of nonlinearity.
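
The two normalization methods mentioned above can be sketched in a few lines of NumPy. The function names and the sample feature values are hypothetical; columns are treated as features and rows as samples.

```python
import numpy as np

def min_max_scale(X):
    """Rescale each feature (column) to the range [0, 1]."""
    X = np.asarray(X, dtype=float)
    lo, hi = X.min(axis=0), X.max(axis=0)
    # Constant columns would divide by zero, so give them a span of 1.
    span = np.where(hi > lo, hi - lo, 1.0)
    return (X - lo) / span

def z_score_scale(X):
    """Shift each feature to zero mean and scale to unit standard deviation."""
    X = np.asarray(X, dtype=float)
    mean, std = X.mean(axis=0), X.std(axis=0)
    std = np.where(std > 0, std, 1.0)
    return (X - mean) / std

# Two features with very different scales (illustrative values only).
X = [[1.0e5, 0.01],
     [2.0e5, 0.03],
     [3.0e5, 0.02]]
X_minmax = min_max_scale(X)
X_zscore = z_score_scale(X)
```

In a real workflow the scaling statistics would normally be computed on the training split only and then reused for validation and test data, to avoid leaking information.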

Implementation Considerations

What is the most important thing to be careful about when using the mesh quality prediction model in practical work?

Implementation using the Python ecosystem (scikit-learn, PyTorch, TensorFlow) is common. Keys to implementation are learning acceleration via GPU parallelization, automatic hyperparameter tuning, and preventing overfitting through cross-validation. Using the HDF5 format is recommended for efficient I/O processing of large-scale CAE data.

Verification Methods

Professor, please tell me about "Verification Methods"!

It's important to choose among k-fold cross-validation, the Leave-One-Out method, and the holdout method according to the purpose, and to evaluate prediction performance from several angles using the coefficient of determination R², RMSE, MAE, and maximum error.
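
As a sketch, the four error metrics and a simple k-fold split can be written directly in NumPy. The names `regression_metrics` and `k_fold_indices` are hypothetical; libraries such as scikit-learn provide their own equivalents.

```python
import numpy as np

def regression_metrics(y_true, y_pred):
    """Return R², RMSE, MAE, and maximum absolute error as a dict."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    err = y_pred - y_true
    ss_res = np.sum(err ** 2)
    # Assumes y_true is not constant; otherwise ss_tot is zero.
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return {
        "r2": 1.0 - ss_res / ss_tot,
        "rmse": float(np.sqrt(np.mean(err ** 2))),
        "mae": float(np.mean(np.abs(err))),
        "max_error": float(np.max(np.abs(err))),
    }

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) index arrays for k-fold cross-validation."""
    rng = np.random.default_rng(seed)
    folds = np.array_split(rng.permutation(n_samples), k)
    for i in range(k):
        train = np.concatenate(folds[:i] + folds[i + 1:])
        yield train, folds[i]
```

Reporting the maximum error alongside the averaged metrics matters for mesh quality, because a single badly mispredicted element can be enough to break a solve.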

Now I understand what my senior meant when they said, "Make sure you do cross-validation properly."

Code Quality and Reproducibility

What should I be most careful about so that the code and experiments stay reproducible?

Ensure code quality and experiment reproducibility by introducing version control (Git), automated testing (pytest), and CI/CD pipelines. Strictly enforce dependency library version pinning (requirements.txt) to make rebuilding the computational environment easy. Fixing random seeds to ensure result reproducibility is also an important implementation practice.
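
Seed fixing can be sketched as a single helper called at the start of every experiment. The name `set_seed` is hypothetical, and only the standard library and NumPy generators are covered here; a deep learning framework would need its own seeding call in addition.

```python
import random

import numpy as np

def set_seed(seed):
    """Fix the Python and NumPy random generators for reproducible runs."""
    random.seed(seed)
    np.random.seed(seed)
    # If PyTorch is used, torch.manual_seed(seed) would be added here as well.

set_seed(42)
first_run = (random.random(), float(np.random.rand()))
set_seed(42)
second_run = (random.random(), float(np.random.rand()))
```

With the same seed, `first_run` and `second_run` hold identical values, which is the property that makes a training run repeatable.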

Ah, I see! So version control, pinned dependencies, and fixed random seeds are what keep the results reproducible.

Details of Implementation Algorithms
