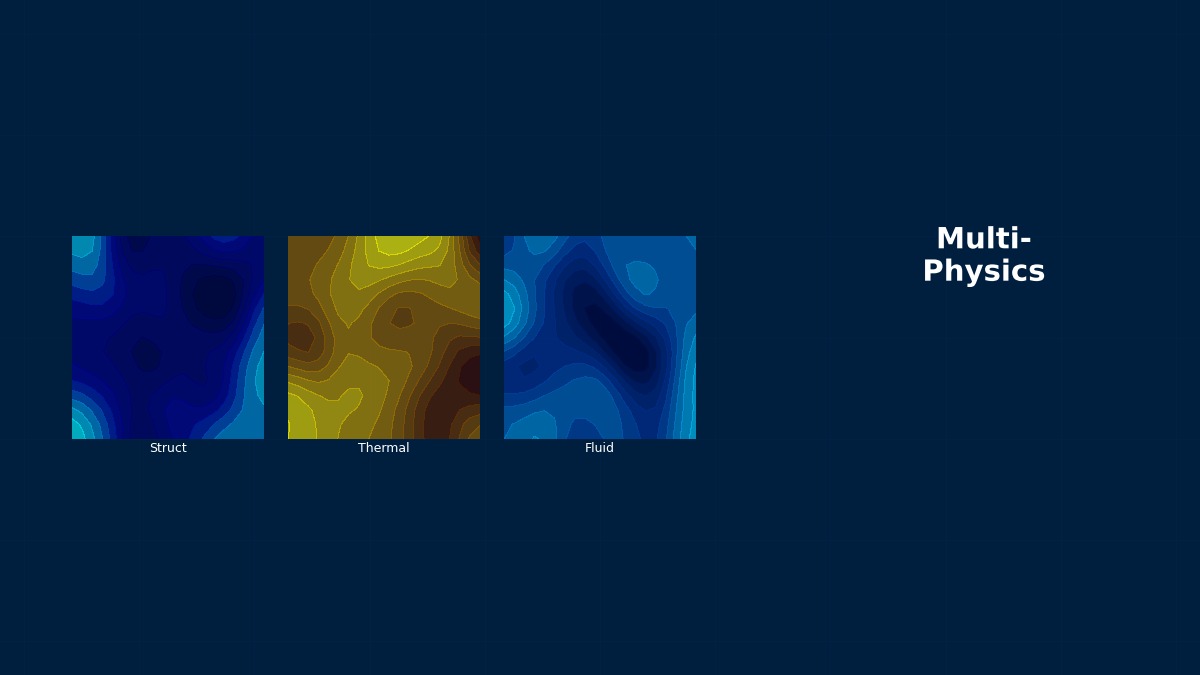

Data-Driven Multiphysics

Theory and Physics

Overview — Why Data-Driven is Needed

Professor, does data-driven multiphysics essentially mean solving physics with AI?

Half right, half wrong. The key point isn't "replacing the entire physics simulation with AI," but rather "letting AI approximate in situations where physics simulations are too slow."

Too slow? How slow specifically?

For example, a single automotive crash analysis case takes 8 to 12 hours. If you want to optimize crash performance together with NVH (noise, vibration, and harshness) and durability, you have to couple three physics disciplines and run thousands of cases. With full FEM alone, that would take years of computation.
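The following rough, serial estimate makes "years" concrete. It assumes, for illustration only, 3,000 crash runs at the 10-hour midpoint of the quoted range, executed one after another. It ignores the NVH and durability runs entirely:

$$3000 \times 10\ \text{h} = 30{,}000\ \text{h}, \qquad \frac{30{,}000\ \text{h}}{8760\ \text{h/year}} \approx 3.4\ \text{years}$$

Running cases in parallel on a cluster shortens the wall-clock time. Adding the NVH and durability runs only increases the total.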

Years! That wouldn't meet product development deadlines...

Exactly. That's why the data-driven approach emerged. Broadly speaking, there are two main approaches:

- (1) Surrogate Model — Use full FEM/CFD calculation results as training data to approximate the input-output relationship with neural networks or Gaussian Processes (GP). After training, predictions are possible in seconds per case.

- (2) PINN (Physics-Informed Neural Network) — Incorporate physical laws (residuals of governing equations) into the loss function, enabling physically consistent predictions even with limited data.

Where is this actually being used?

In multi-objective optimization for automotive OEMs involving crash-NVH-durability, GP-based surrogates have been in practical use since around 2020. For thermal-structural coupling in aero-engine turbine blades, NASA and Rolls-Royce are using multi-fidelity surrogates.

Basics of Surrogate Models

So, a surrogate model is essentially an "approximation formula"? How is it different from Response Surface Methodology (RSM)?

Good question. RSM approximates with a 2nd-order polynomial, so its accuracy is insufficient when the input-output relationship is complex and nonlinear. Surrogate models are a general term for "proxy models" that approximate full simulation input-output with more flexible functions.
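To make the contrast concrete, here is a minimal NumPy sketch of a classical second-order RSM fitted by least squares. The function names and the two test responses are hypothetical illustrations, not part of any specific tool. A full quadratic in $d$ variables has $(d+1)(d+2)/2$ coefficients. The quadratic response below is reproduced exactly, while the oscillating one leaves a residual that no choice of quadratic coefficients can remove.

```python
import itertools

import numpy as np


def quadratic_features(X):
    """Full second-order basis: 1, x_i, and x_i * x_j for all i <= j."""
    X = np.atleast_2d(X)
    n, d = X.shape
    cols = [np.ones(n)]
    cols += [X[:, i] for i in range(d)]
    cols += [X[:, i] * X[:, j]
             for i, j in itertools.combinations_with_replacement(range(d), 2)]
    return np.column_stack(cols)   # (d + 1)(d + 2) / 2 columns


def fit_rsm(X, y):
    """Least-squares coefficients of the quadratic response surface."""
    coef, *_ = np.linalg.lstsq(quadratic_features(X), y, rcond=None)
    return coef


def predict_rsm(coef, X):
    return quadratic_features(X) @ coef


rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(40, 2))
y_quad = 1 + 2 * X[:, 0] - X[:, 1] + 0.5 * X[:, 0] * X[:, 1] + 3 * X[:, 1] ** 2
y_wavy = np.sin(6 * X[:, 0]) * np.cos(6 * X[:, 1])   # strongly nonlinear response
for name, y in [("quadratic", y_quad), ("wavy", y_wavy)]:
    pred = predict_rsm(fit_rsm(X, y), X)
    print(f"{name}: training RMSE = {np.sqrt(np.mean((pred - y) ** 2)):.3g}")
```

Even the training error stays large for the wavy response, which is exactly the situation where a more flexible surrogate is needed.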

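The general formulation of a surrogate model is as follows. For input parameters $\mathbf{x} \in \mathbb{R}^d$ (design variables, boundary conditions), we build a model $\hat{f}(\mathbf{x})$ that approximates the output $\mathbf{y} = f(\mathbf{x})$ of a high-fidelity simulation:

$$\hat{f}(\mathbf{x}) \approx f(\mathbf{x}) = \mathbf{y}, \qquad \mathbf{x} \in \mathbb{R}^d$$

Here $\hat{f}$ is fitted to training pairs $\{(\mathbf{x}_i, \mathbf{y}_i)\}_{i=1}^n$ obtained from full simulations. Once trained, it is far cheaper to evaluate than $f$.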

Comparison of major surrogate modeling techniques:

| Method | Characteristics | Uncertainty Estimation | Training Data Size (samples) | Application Scenarios |
|---|---|---|---|---|
| Gaussian Process Regression (GPR/Kriging) | Expresses correlation via kernel function | Naturally obtained | Small (50-500) | Design optimization, active learning |
| Neural Network (DNN) | Strong for high-dimensional input/output | MC dropout, etc. | Medium to large (1000+) | Image-like field prediction |
| RBF (Radial Basis Function) | Easy to implement | None | Small to medium | Smooth responses |
| Random Forest / XGBoost | Robust, interpretable | Ensemble variance | Medium | Mixed classification/regression problems |
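The "Easy to implement" entry for RBF is literal: an RBF interpolant is one linear solve. The sketch below assumes a Gaussian basis with a hypothetical shape parameter `epsilon`. `fit_rbf` and `predict_rbf` are illustrative names, not a library API. In line with the table, it returns no uncertainty estimate.

```python
import numpy as np


def gaussian_rbf_matrix(A, B, epsilon):
    """Phi_ij = exp(-(epsilon * ||a_i - b_j||)^2)."""
    d2 = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return np.exp(-(epsilon ** 2) * d2)


def fit_rbf(X, y, epsilon=1.0):
    """Solve Phi w = y so the interpolant passes through every training point."""
    return np.linalg.solve(gaussian_rbf_matrix(X, X, epsilon), y)


def predict_rbf(X_train, w, X_new, epsilon=1.0):
    """Weighted sum of basis functions centred on the training inputs."""
    return gaussian_rbf_matrix(X_new, X_train, epsilon) @ w


X = np.linspace(0.0, 10.0, 11)[:, None]   # 11 training inputs, spacing 1.0
y = np.sin(X[:, 0])
w = fit_rbf(X, y)
X_new = np.array([[2.5], [7.25]])
print(predict_rbf(X, w, X_new))
```

The shape parameter must suit the sample spacing. With widely spread basis functions (small `epsilon` relative to the spacing), the matrix becomes severely ill-conditioned.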

Gaussian Process Regression (GPR)

The name "Gaussian Process" sounds difficult already... What is it intuitively?

Roughly speaking, it's an "infinite-dimensional normal distribution." It draws a smooth curve passing through known data points while telling you "I'm not confident here" with uncertainty in areas without data. This uncertainty is extremely useful in active learning.

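In GPR, for observed data $\mathcal{D} = \{(\mathbf{x}_i, y_i)\}_{i=1}^n$, the predictive mean $\mu_*$ and variance $\sigma_*^2$ of the posterior distribution at a new input $\mathbf{x}_*$ are obtained in closed form (assuming a zero prior mean):

$$\mu_* = \mathbf{k}_*^\top \left(K + \sigma_n^2 I\right)^{-1} \mathbf{y}, \qquad \sigma_*^2 = k(\mathbf{x}_*, \mathbf{x}_*) - \mathbf{k}_*^\top \left(K + \sigma_n^2 I\right)^{-1} \mathbf{k}_*$$

where $\mathbf{y} = (y_1, \dots, y_n)^\top$.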

Here, $K$ is the kernel matrix ($K_{ij} = k(\mathbf{x}_i, \mathbf{x}_j)$), $\mathbf{k}_*$ is the kernel vector between a new input point and the training data, and $\sigma_n^2$ is the observation noise variance.
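The closed-form posterior can be sketched in a few lines of NumPy. This sketch assumes a zero prior mean, a squared-exponential kernel, and fixed hyperparameters. The function names are illustrative. A Cholesky factorization replaces the explicit matrix inverse, which is the usual numerically safer route.

```python
import numpy as np


def se_kernel(A, B, length_scale=1.0, signal_var=1.0):
    """Squared-exponential kernel matrix: s_f^2 * exp(-||a - b||^2 / (2 l^2))."""
    d2 = np.sum((A[:, None, :] - B[None, :, :]) ** 2, axis=-1)
    return signal_var * np.exp(-0.5 * d2 / length_scale ** 2)


def gp_posterior(X, y, X_star, length_scale=1.0, signal_var=1.0, noise_var=1e-6):
    """Zero-prior-mean GP predictive mean mu_* and variance sigma_*^2 at X_star."""
    K_noisy = se_kernel(X, X, length_scale, signal_var) + noise_var * np.eye(len(X))
    L = np.linalg.cholesky(K_noisy)                          # K + s_n^2 I = L L^T
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))      # (K + s_n^2 I)^{-1} y
    K_star = se_kernel(X, X_star, length_scale, signal_var)  # column j is k_* for X_star[j]
    mu = K_star.T @ alpha
    v = np.linalg.solve(L, K_star)                           # L^{-1} k_*
    var = signal_var - np.sum(v ** 2, axis=0)                # k(x_*, x_*) - k_*^T (K + s_n^2 I)^{-1} k_*
    return mu, np.maximum(var, 0.0)                          # clip tiny negative round-off


X = np.array([[0.0], [1.0], [2.0], [4.0]])
y = np.sin(X[:, 0])
X_star = np.array([[1.0], [3.0], [10.0]])   # on a sample, in a gap, far away
mu, var = gp_posterior(X, y, X_star)
print(mu, var)
```

The variance behaves as described in the dialogue. It is near zero at a sampled point, intermediate in the gap at $x = 3$, and returns to the prior variance $\sigma_f^2$ far from all data.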

Which kernel function should I choose?

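Since CAE responses are usually smooth, the Matern-5/2 kernel or the RBF (squared exponential) kernel is the standard choice. An anisotropic kernel with a separate length scale for each input (ARD: Automatic Relevance Determination) estimates the importance of each design variable automatically. A short fitted length scale marks a sensitive variable, and a very long one marks a variable the response barely depends on.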

Kernel Function Selection Guidelines

| Kernel | Formula | Smoothness | Use Case |
|---|---|---|---|
| RBF (SE) | $k(r) = \sigma_f^2 \exp\left(-\frac{r^2}{2l^2}\right)$ | Infinitely differentiable | Very smooth responses |
| Matern-5/2 | $k(r) = \sigma_f^2 \left(1 + \frac{\sqrt{5}r}{l} + \frac{5r^2}{3l^2}\right)\exp\left(-\frac{\sqrt{5}r}{l}\right)$ | Twice differentiable | Standard choice for CAE |
| Matern-3/2 | $k(r) = \sigma_f^2 \left(1 + \frac{\sqrt{3}r}{l}\right)\exp\left(-\frac{\sqrt{3}r}{l}\right)$ | Once differentiable | Slightly rough responses |

$r = \|\mathbf{x} - \mathbf{x}'\|$, $l$ is the length scale, $\sigma_f^2$ is the signal variance. Hyperparameters are determined by maximizing the log marginal likelihood.
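The sketch below implements the three kernels from the table in ARD form, with one length scale per input. It also implements the log marginal likelihood
$\log p(\mathbf{y} \mid X) = -\tfrac12 \mathbf{y}^\top C^{-1}\mathbf{y} - \tfrac12 \log|C| - \tfrac{n}{2}\log 2\pi$ with $C = K + \sigma_n^2 I$.
For brevity it picks length scales by a coarse grid search. The signal and noise variances are held fixed, and the grid values are assumptions. Real tools such as scikit-learn's `GaussianProcessRegressor` instead use gradient-based optimization with restarts. All names here are illustrative.

```python
import numpy as np


def scaled_distance(A, B, length_scales):
    """r / l with one length scale per input dimension (ARD)."""
    diff = (A[:, None, :] - B[None, :, :]) / np.asarray(length_scales, dtype=float)
    return np.sqrt(np.sum(diff ** 2, axis=-1))


def kernel(A, B, length_scales, signal_var=1.0, kind="matern52"):
    """Table kernels evaluated on the length-scaled distance s = r / l."""
    s = scaled_distance(A, B, length_scales)
    if kind == "rbf":
        return signal_var * np.exp(-0.5 * s ** 2)
    if kind == "matern52":
        a = np.sqrt(5.0) * s
        return signal_var * (1.0 + a + a ** 2 / 3.0) * np.exp(-a)
    if kind == "matern32":
        a = np.sqrt(3.0) * s
        return signal_var * (1.0 + a) * np.exp(-a)
    raise ValueError(f"unknown kernel: {kind}")


def log_marginal_likelihood(X, y, length_scales, signal_var=1.0, noise_var=1e-4, kind="matern52"):
    """-y^T C^{-1} y / 2 - log|C| / 2 - (n / 2) log(2 pi), with C = K + s_n^2 I."""
    n = len(X)
    C = kernel(X, X, length_scales, signal_var, kind) + noise_var * np.eye(n)
    L = np.linalg.cholesky(C)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    # log|C| = 2 * sum(log(diag(L))), so half of it is sum(log(diag(L)))
    return -0.5 * y @ alpha - np.sum(np.log(np.diag(L))) - 0.5 * n * np.log(2.0 * np.pi)


rng = np.random.default_rng(0)
X = rng.uniform(0.0, 1.0, size=(30, 2))
y = np.sin(6.0 * X[:, 0])                    # depends on x0 only; x1 is irrelevant
grid = [0.1, 0.3, 1.0, 3.0, 10.0]
candidates = [(l0, l1) for l0 in grid for l1 in grid]
best = max(candidates, key=lambda ls: log_marginal_likelihood(X, y, ls))
print("ARD length scales (l0, l1):", best)
```

Because the toy response ignores $x_1$, the fitted $l_1$ is expected to come out long. This is the "automatic relevance" signal described above.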

PINN — Physics-Informed Neural Network

Is PINN a completely different approach from surrogates?

Fundamentally different. Surrogates learn from training data, that is, from simulation results. PINN builds the governing equations themselves into the loss function. It can therefore potentially produce physically correct solutions with little training data, and in extreme cases with none at all.

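The PINN loss function is a weighted sum of a data consistency term, a physical law (PDE residual) term, and a boundary condition term:

$$\mathcal{L} = \frac{1}{N_d}\sum_{i=1}^{N_d} \left\| \hat{u}(\mathbf{x}_i) - u_i \right\|^2 + \lambda_r \frac{1}{N_r}\sum_{j=1}^{N_r} \left\| \mathcal{N}[\hat{u}](\mathbf{x}_j) \right\|^2 + \lambda_b \frac{1}{N_b}\sum_{k=1}^{N_b} \left\| \mathcal{B}[\hat{u}](\mathbf{x}_k) \right\|^2$$

Here $u_i$ are the $N_d$ observed values. The governing equation and boundary conditions are written as $\mathcal{N}[u] = 0$ and $\mathcal{B}[u] = 0$, evaluated at $N_r$ collocation points inside the domain and $N_b$ points on the boundary.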

Here, $\mathcal{N}[\cdot]$ is the differential operator of the governing equation, and $\mathcal{B}[\cdot]$ is the boundary condition operator. Automatic differentiation computes the partial derivatives of the network output $\hat{u}$ with respect to its inputs exactly, without finite-difference stencils. The residuals can therefore be evaluated at scattered points, and no mesh is needed.
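The sketch below shows how the three loss terms are assembled on a hypothetical 1D toy problem, $-u'' = \pi^2 \sin(\pi x)$ on $(0, 1)$ with $u(0) = u(1) = 0$ (exact solution $\sin \pi x$). To keep it NumPy-only, it deviates from a real PINN in one respect. Instead of automatic differentiation, the input derivatives of a one-hidden-layer tanh network are derived by hand. The weights $\lambda_r = 1$ and $\lambda_b = 10$ are arbitrary choices. Only the loss is evaluated. Training would also require gradients with respect to the network weights, which an autodiff framework such as PyTorch or JAX normally provides.

```python
import numpy as np


def init_params(n_hidden, rng):
    """Random weights for u(x) = sum_j v_j * tanh(w_j * x + c_j) + b."""
    return {"w": rng.normal(size=n_hidden), "c": rng.normal(size=n_hidden),
            "v": rng.normal(size=n_hidden) / n_hidden, "b": 0.0}


def net(params, x):
    """Return u, du/dx, d2u/dx2 at points x, using hand-derived tanh derivatives."""
    t = np.tanh(np.outer(x, params["w"]) + params["c"])   # (n_points, n_hidden)
    dt = 1.0 - t ** 2                                      # tanh'(z)
    d2t = -2.0 * t * dt                                    # tanh''(z)
    u = t @ params["v"] + params["b"]
    du = dt @ (params["v"] * params["w"])
    d2u = d2t @ (params["v"] * params["w"] ** 2)
    return u, du, d2u


def pinn_loss(params, x_data, u_data, x_res, x_bc, lam_r=1.0, lam_b=10.0):
    """Data term + lam_r * PDE residual term + lam_b * boundary term."""
    u_d, _, _ = net(params, x_data)
    loss_data = np.mean((u_d - u_data) ** 2)
    _, _, d2u = net(params, x_res)                        # N[u] = u'' + pi^2 sin(pi x)
    loss_pde = np.mean((d2u + np.pi ** 2 * np.sin(np.pi * x_res)) ** 2)
    u_b, _, _ = net(params, x_bc)                         # B[u] = u at x = 0 and x = 1
    loss_bc = np.mean(u_b ** 2)
    return loss_data + lam_r * loss_pde + lam_b * loss_bc


rng = np.random.default_rng(0)
params = init_params(16, rng)
x_data = np.array([0.25, 0.5])                # two "measured" points
u_data = np.sin(np.pi * x_data)               # taken from the exact solution
x_res = np.linspace(0.0, 1.0, 50)             # collocation points, no mesh connectivity
x_bc = np.array([0.0, 1.0])
print(pinn_loss(params, x_data, u_data, x_res, x_bc))
```

The collocation points are just coordinates, so they could equally be scattered randomly inside a complex geometry.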

No mesh needed? That sounds very attractive... Are there no drawbacks?

To be honest, there are many drawbacks. First, tuning the weight coefficients $\lambda_r$ and $\lambda_b$ is critical; poor settings can prevent convergence entirely. In multiphysics, each physical field has its own scale (temperatures of hundreds of K, stresses of hundreds of MPa), which makes balancing the loss terms particularly difficult. PINNs also often struggle with high-frequency vibrations or solutions with steep gradients, and in many cases they have not yet reached FEM accuracy.

So what are PINN's strong suits?

Inverse problems are its strength. PINN excels at tasks such as identifying material parameters from observed data and filling in missing experimental data with physical laws. It is also effective for complex geometries where the governing equations are known but mesh generation is difficult.

DeepONet — Operator Learning

I also hear the term DeepONet a lot lately. How is it different from PINN?

PINN learns "the solution to one specific problem." DeepONet learns "the mapping (operator) from an input function to an output function." For example, a mapping like "input any boundary condition, output the temperature field." Once learned, it can instantly predict the field for new boundary conditions.

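The DeepONet output is the inner product of the outputs of a Branch Net (which encodes the input function) and a Trunk Net (which encodes the output location):

$$\mathcal{G}(u)(y) \approx \sum_{k=1}^{p} b_k(u)\, t_k(y)$$

Here, $\mathcal{G}$ is the operator being learned, and $p$ is the number of outputs shared by both networks.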

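Here, $u$ is the input function (boundary/initial conditions), $y$ is the output evaluation point, $b_k$ is the Branch Net output, and $t_k$ is the Trunk Net output. In practice, the Branch Net receives $u$ as its values at a fixed set of sensor points. The Fourier Neural Operator (FNO) is also an operator learning method. It applies learned weights to a truncated set of Fourier modes of the field, which lets it capture global, spatially periodic structure efficiently.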
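The branch/trunk structure can be seen in a forward pass. In this NumPy sketch, each input function is represented by its values at the same $m$ fixed sensor points. The layer sizes, tanh activations, and random (untrained) weights are assumptions for illustration. Training on pairs of input functions and simulated output fields is omitted.

```python
import numpy as np


def init_mlp(sizes, rng):
    """List of (W, b) pairs for a fully connected net with the given layer sizes."""
    return [(rng.normal(size=(m, n)) / np.sqrt(m), np.zeros(n))
            for m, n in zip(sizes[:-1], sizes[1:])]


def mlp(params, x):
    """tanh hidden layers, linear output layer."""
    for W, b in params[:-1]:
        x = np.tanh(x @ W + b)
    W, b = params[-1]
    return x @ W + b


def deeponet(branch, trunk, u_sensors, y):
    """G(u)(y) ~ sum_k b_k(u) t_k(y).

    u_sensors: (n_funcs, m)       each input function sampled at the same m sensors
    y:         (n_points, dim_y)  locations where the output field is evaluated
    returns:   (n_funcs, n_points)
    """
    b = mlp(branch, u_sensors)   # (n_funcs, p) branch coefficients b_k(u)
    t = mlp(trunk, y)            # (n_points, p) trunk basis values t_k(y)
    return b @ t.T               # inner product over k for every (u, y) pair


rng = np.random.default_rng(0)
m, p = 20, 32                                              # sensors, basis terms
branch = init_mlp([m, 64, p], rng)
trunk = init_mlp([1, 64, p], rng)
s = np.linspace(0.0, 1.0, m)
u = np.stack([np.sin(k * np.pi * s) for k in (1, 2, 3)])   # three input functions
y = np.linspace(0.0, 1.0, 101)[:, None]                    # 101 query points
G = deeponet(branch, trunk, u, y)                          # untrained predictions
print(G.shape)
```

Once trained, a new input function costs one branch evaluation. The trunk can be queried at any location, which is what makes prediction for new boundary conditions instant.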

The "Universal Surrogate" Does Not Exist — The Lesson of the No Free Lunch Theorem

In data-driven modeling, the No Free Lunch theorem holds firmly: no single method is optimal for all problems. GPR is strongest for low-dimensional (up to about 20 variables) smooth responses but scales poorly beyond about 100 dimensions. DNNs are strong in high dimensions but overfit when training data is scarce. PINNs can embed physical laws but require painstaking, trial-and-error hyperparameter tuning. In practice, choosing the method to match the nature of the problem is the fundamental stance of data-driven multiphysics.

Numerical Methods and Implementation

Surrogate Construction Workflow

Please tell me the steps to actually build a surrogate model.

Five steps:

- (1) Problem Definition: Determine the input variables (design parameters, material properties, load conditions) and the output quantities (maximum stress, natural frequency, temperature, etc.).

- (2) DOE (Design of Experiments): Generate the initial sample points with Latin Hypercube Sampling (LHS) or Sobol sequences. A common guideline is $10d$ to $20d$ points for $d$ input dimensions.

- (3) High-Fidelity Simulation Runs: Run FEM/CFD calculations at every DOE point to obtain the training data.

- (4) Model Training: Build the surrogate with GPR or a DNN and evaluate its accuracy by cross-validation.

- (5) Validation / Active Learning Loop: Check RMSE and R² on validation data; if accuracy is insufficient, acquire additional samples via active learning.
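Steps (4) and (5) rely on two metrics and a cross-validation loop. The sketch below defines them for any surrogate exposed as a `fit`/`predict` pair. The linear least-squares "surrogate" and the synthetic data are hypothetical stand-ins so the example runs on its own. In practice a GPR or DNN would be plugged in.

```python
import numpy as np


def rmse(y_true, y_pred):
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    return float(np.sqrt(np.mean((y_true - y_pred) ** 2)))


def r2_score(y_true, y_pred):
    """1 - SS_res / SS_tot; 1.0 is perfect, 0.0 is no better than predicting the mean."""
    y_true, y_pred = np.asarray(y_true, float), np.asarray(y_pred, float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)


def kfold_cv(X, y, fit, predict, k=5, seed=0):
    """Mean validation (RMSE, R^2) over k folds for any fit/predict pair."""
    idx = np.random.default_rng(seed).permutation(len(X))
    scores = []
    for fold in np.array_split(idx, k):
        train = np.setdiff1d(idx, fold)
        model = fit(X[train], y[train])
        y_hat = predict(model, X[fold])
        scores.append((rmse(y[fold], y_hat), r2_score(y[fold], y_hat)))
    mean_rmse, mean_r2 = np.mean(scores, axis=0)
    return float(mean_rmse), float(mean_r2)


# Hypothetical stand-in surrogate: linear least squares with an intercept
def fit_linear(X, y):
    return np.linalg.lstsq(np.c_[np.ones(len(X)), X], y, rcond=None)[0]


def predict_linear(coef, X):
    return np.c_[np.ones(len(X)), X] @ coef


rng = np.random.default_rng(1)
X = rng.uniform(0.0, 1.0, size=(60, 3))
y = X @ np.array([1.0, -2.0, 0.5]) + 0.01 * rng.normal(size=60)
print(kfold_cv(X, y, fit_linear, predict_linear))
```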

What is LHS in step 2? Isn't random sampling okay?

With random sampling, samples may happen to cluster in some regions and leave others empty. LHS (Latin Hypercube Sampling) divides each variable's range into $n$ equal intervals and places exactly one point in each interval. As a result, every variable's full range is covered evenly, and the points spread over the design space far more reliably than with pure random sampling. For expensive simulations every wasted point is costly, so this is an essential technique.
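The stratify-then-shuffle idea fits in a few lines. This NumPy sketch draws one random point inside each of the $n$ bins per variable, then shuffles the bin order independently per variable. The three design variables and their bounds are hypothetical. SciPy provides a production implementation as `scipy.stats.qmc.LatinHypercube`, which also offers optimized variants.

```python
import numpy as np


def latin_hypercube(n, d, rng=None):
    """n samples in [0, 1)^d; each variable's range is split into n equal bins
    and every bin receives exactly one sample."""
    rng = np.random.default_rng(rng)
    # Row i gets a random point inside bin i, for every dimension
    u = (np.arange(n)[:, None] + rng.uniform(size=(n, d))) / n
    # Shuffle bin order independently per dimension so variables are not paired by bin
    for j in range(d):
        u[:, j] = u[rng.permutation(n), j]
    return u


def scale_to_bounds(u, lower, upper):
    """Map unit-cube samples to physical variable ranges."""
    lower, upper = np.asarray(lower, float), np.asarray(upper, float)
    return lower + u * (upper - lower)


d = 3
n = 10 * d                                   # lower end of the 10d-20d guideline
U = latin_hypercube(n, d, rng=42)
# Hypothetical design variables: thickness [mm], yield stress [MPa], load angle [deg]
X = scale_to_bounds(U, lower=[1.0, 200.0, 0.0], upper=[3.0, 400.0, 50.0])
print(X[:5])
```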

Active Learning DOE

Does active learning mean AI tells us "where to compute next"?

Exactly. It uses the GPR predictive variance $\sigma_*^2(\mathbf{x})$ to automatically judge "this region has high uncertainty, so additional samples are needed." The Acquisition Function in Bayesian Optimization does precisely this.
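The simplest version of "ask the model where it is unsure" is pure uncertainty sampling. Fit a GP, evaluate the predictive variance over a candidate grid, run the simulation at the most uncertain candidate, and repeat. In this sketch, `expensive_simulation` is a hypothetical cheap stand-in for an FEM/CFD run. The length scale (0.1) and unit signal variance are fixed assumptions rather than re-estimated each iteration.

```python
import numpy as np


def sq_exp(a, b, l=0.1):
    """1-D squared-exponential kernel with unit signal variance."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / l ** 2)


def gp_predict(x, y, x_new, l=0.1, noise=1e-6):
    """GP posterior mean and variance at x_new (zero prior mean)."""
    K = sq_exp(x, x, l) + noise * np.eye(len(x))
    k_star = sq_exp(x, x_new, l)
    mu = k_star.T @ np.linalg.solve(K, y)
    var = 1.0 - np.sum(k_star * np.linalg.solve(K, k_star), axis=0)
    return mu, np.maximum(var, 0.0)


def expensive_simulation(x):
    # Hypothetical stand-in for one high-fidelity run
    return np.sin(10 * x) * np.exp(-x)


candidates = np.linspace(0.0, 1.0, 201)
x_train = np.array([0.0, 0.5, 1.0])          # initial DOE
y_train = expensive_simulation(x_train)
history = []                                  # max predictive variance per iteration
for _ in range(8):
    _, var = gp_predict(x_train, y_train, candidates)
    history.append(var.max())
    x_next = candidates[np.argmax(var)]       # most uncertain candidate
    x_train = np.append(x_train, x_next)
    y_train = np.append(y_train, expensive_simulation(x_next))
_, var = gp_predict(x_train, y_train, candidates)
history.append(var.max())
print(np.round(history, 3))
```

For optimization rather than global accuracy, the variance criterion is replaced by an acquisition function that also accounts for the predicted mean.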

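Representative acquisition functions, written for minimization with $f_{\min}$ the best value observed so far, are:

$$\mathrm{EI}(\mathbf{x}) = \left(f_{\min} - \mu_*\right)\Phi(z) + \sigma_*\,\phi(z), \qquad \mathrm{LCB}(\mathbf{x}) = \mu_* - \kappa\,\sigma_*$$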

Here, $z = (f_{\min} - \mu_*)/\sigma_*$, $\Phi$ and $\phi$ are the CDF and PDF of the standard normal distribution. EI (Expected Improvement) is the expected value of "how much the current best value can be improved," automatically balancing exploration and exploitation.
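EI and LCB are cheap scalar formulas once the GP supplies $\mu_*$ and $\sigma_*$. This sketch uses only the standard library, with the normal CDF built from `math.erf`. EI follows $(f_{\min} - \mu_*)\Phi(z) + \sigma_*\phi(z)$ with $z = (f_{\min} - \mu_*)/\sigma_*$. LCB is $\mu_* - \kappa\sigma_*$, where the default $\kappa = 2$ is an assumption. The two candidate points are made-up numbers.

```python
import math


def norm_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def norm_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mu, sigma, f_min):
    """EI for minimization: (f_min - mu) * Phi(z) + sigma * phi(z), z = (f_min - mu) / sigma."""
    if sigma <= 0.0:
        return max(f_min - mu, 0.0)   # no uncertainty left: improvement is deterministic
    z = (f_min - mu) / sigma
    return (f_min - mu) * norm_cdf(z) + sigma * norm_pdf(z)


def lower_confidence_bound(mu, sigma, kappa=2.0):
    """LCB for minimization: the candidate with the smallest value is sampled next."""
    return mu - kappa * sigma


f_min = 1.0                          # best (smallest) value observed so far
candidates = {"A": (0.9, 0.05),      # predicted slightly better, very certain
              "B": (1.2, 0.5)}       # predicted worse, very uncertain
for name, (mu, sigma) in candidates.items():
    print(name, expected_improvement(mu, sigma, f_min), lower_confidence_bound(mu, sigma))
```

With these numbers, both criteria prefer B. Its large uncertainty outweighs its worse predicted mean, which is the exploration side of the trade-off.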

| Acquisition Function | Abbreviation | Characteristics | Use Case in Multiphysics |
|---|---|---|---|
| Expected Improvement | EI | Good balance of exploration and exploitation | Standard choice for single-objective optimization |
| Lower Confidence Bound | LCB | Degree of exploration controlled by parameter $\kappa$ | Constrained optimization |
| Knowledge Gradient | KG | Maximizes the value of information | Simulations with large noise |
| Expected Hypervolume Improvement | EHVI | Expected growth of the hypervolume dominated by the Pareto front | Multi-objective (e.g., crash-NVH) |

How much can active learning reduce computational cost?

Published papers and practitioner reports indicate that, compared with random DOE, active learning often reduces the number of samples needed to reach the same accuracy by 50-80%. In reported automotive crash optimization cases, a sufficiently accurate surrogate was built from only 150 full FEM runs, where random DOE would have needed more than 500.

Transfer Learning

I have a strong image of transfer learning in image recognition. Can it be used in CAE too?

It's very useful. The concept is simple: use a model trained on a similar problem as the starting point for a new one. For example, a crash-response surrogate trained on one car model's B-pillar can be transferred to a new B-pillar after a minor design change. Because the shapes are similar, a small amount of fine-tuning yields a high-accuracy surrogate.

Can it transfer even if the physics changes? Like from structural analysis to thermal analysis?

That's transfer between different physical fields, so it's a more advanced topic. But in the context of PINN, there is research on transferring the lower layers (feature extraction layers) of a network trained on a 2D problem to a 3D problem. The lower layers learn generic features like "spatial patterns of gradients," so parts of them can be useful even if the physics changes somewhat.

Multi-Fidelity Modeling

Does "multi-fidelity" mean combining coarse and fine meshes?

Exactly. It combines many cheap but inaccurate calculations (Low-Fidelity: coarse-mesh FEM, simplified models, etc.) with a few expensive but accurate ones (High-Fidelity: fine-mesh FEM).

The basic model for Co-Kriging (multi-fidelity Gaussian Process) is:

$$f_{\text{HF}}(\mathbf{x}) = \rho\, f_{\text{LF}}(\mathbf{x}) + \delta(\mathbf{x})$$

Here, $f_{\text{HF}}$ is the high-fidelity output, $f_{\text{LF}}$ is the low-fidelity output, $\rho$ is a scaling coefficient, and $\delta(\mathbf{x})$ is a GP representing the difference. Even with little high-fidelity data, it enables accurate predictions by leveraging trends from low-fidelity data.
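The following is a simplified two-step sketch of $f_{\text{HF}} = \rho f_{\text{LF}} + \delta$, not full co-kriging. First, $\rho$ is fitted by least squares at the high-fidelity points. Then $\delta$ is interpolated by a zero-mean GP with a fixed length scale. Full co-kriging instead estimates $\rho$ and the hyperparameters of both GPs jointly by maximum likelihood. Both model functions below are hypothetical. The low-fidelity model is assumed cheap enough to evaluate anywhere.

```python
import numpy as np


def sq_exp(a, b, length_scale):
    """1-D squared-exponential kernel matrix with unit signal variance."""
    return np.exp(-0.5 * (a[:, None] - b[None, :]) ** 2 / length_scale ** 2)


def fit_multifidelity(x_hf, y_hf, f_lf, length_scale=0.2, noise_var=1e-8):
    """Two-step sketch of f_HF(x) = rho * f_LF(x) + delta(x)."""
    lf_at_hf = f_lf(x_hf)
    rho = float(lf_at_hf @ y_hf / (lf_at_hf @ lf_at_hf))   # step 1: least-squares scaling
    delta = y_hf - rho * lf_at_hf                           # observed discrepancy samples
    K = sq_exp(x_hf, x_hf, length_scale) + noise_var * np.eye(len(x_hf))
    alpha = np.linalg.solve(K, delta)                       # step 2: GP weights for delta

    def predict(x):
        return rho * f_lf(x) + sq_exp(x, x_hf, length_scale) @ alpha

    return rho, predict


def f_lf(x):   # hypothetical cheap model: right shape, wrong amplitude
    return np.sin(8.0 * x)


def f_hf(x):   # hypothetical expensive model, only sampled at 5 points
    return 1.5 * np.sin(8.0 * x) + 0.3 * x


x_hf = np.linspace(0.0, 1.0, 5)
y_hf = f_hf(x_hf)
rho, predict = fit_multifidelity(x_hf, y_hf, f_lf)
xs = np.linspace(0.0, 1.0, 201)
print("rho =", rho, " max error =", np.max(np.abs(predict(xs) - f_hf(xs))))
```

Five high-fidelity points are far too few to resolve the oscillation on their own. The low-fidelity trend supplies the shape, and the GP only has to learn the smooth discrepancy.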

What is actually used as the low-fidelity model in practice?

Let me list a few common patterns:

- Coarse-mesh FEM (
